How Lidar Enables Autonomous Vehicles to Operate Safely

Situational awareness is essential to good driving. To navigate a vehicle to a chosen destination, drivers need to know where they are and continuously observe their surroundings. These observations allow a driver to take actions instinctively, such as accelerating or braking, changing lanes, merging onto the highway and maneuvering around obstacles and objects.

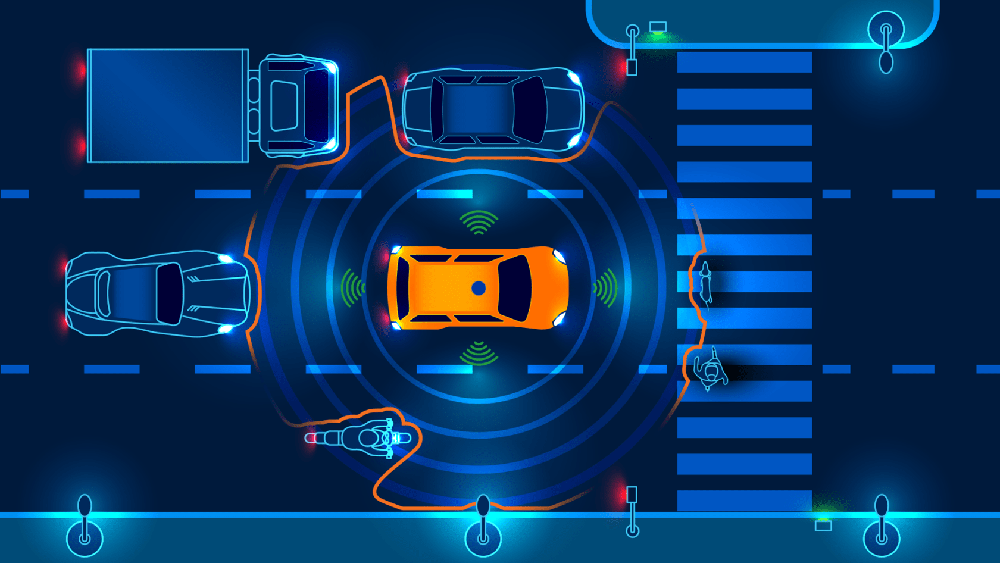

Autonomous vehicles (AVs) work in much the same way, except they use sensor and GPS technologies to perceive the environment and plan a path to the desired destination. These technologies work together to establish where the car is located and the correct route to take. They continuously determine what is going on around the vehicle, locating the position of people and objects near it and assessing the speed and direction of their movements.

This steady stream of information is fed into the vehicle’s onboard computer system, which determines the safest way to navigate within its surroundings. To better understand how sensor technologies in autonomous cars work, let’s examine how these vehicles perceive their location and environment to identify and avoid objects in their pathways.

Precise Measurements of Location and Surroundings

Sensor technologies provide information about the surrounding environment to the vehicle’s computer system, allowing the vehicle to move safely in our three-dimensional world. These sensors gather data that describe a car’s changes in position and orientation.

Autonomous vehicles use high-definition maps that are updated in real time to guide the car’s navigation system. This updating is necessary because the conditions of our roadways are not static. Congestion, accidents and construction complicate real-life movement on streets and highways. On-vehicle sensing technologies, such as lidar, cameras and radar, perceive the environment in real time to provide accurate data on these ever-changing roadway situations.

The real-time maps that these sensors produce are often highly detailed, including road lanes, pavement edges, shoulders, dividers and other critical information. These maps include additional information, such as the locations of streetlights, utility poles and traffic signs. The vehicle must be aware of each of these features to navigate the roadway safely.

Sensing technologies address other critical driving requirements. For instance, some lidar sensors enable autonomous vehicles to have a 360-degree view so the entire environment around the vehicle can be seen while operating. Having a wide field of view is particularly important in navigating complicated situations, such as a high-speed merge onto a highway.

Detecting and Avoiding Objects

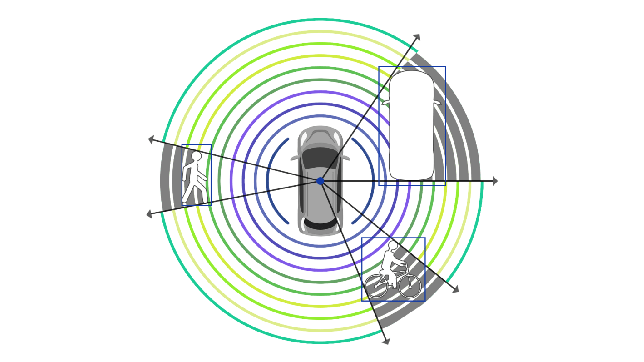

Sensor technologies provide onboard computers with the data they need to detect and identify objects such as vehicles, bicyclists, animals and pedestrians. This data also allows the vehicle’s computer to measure these objects’ locations, speeds and trajectories.

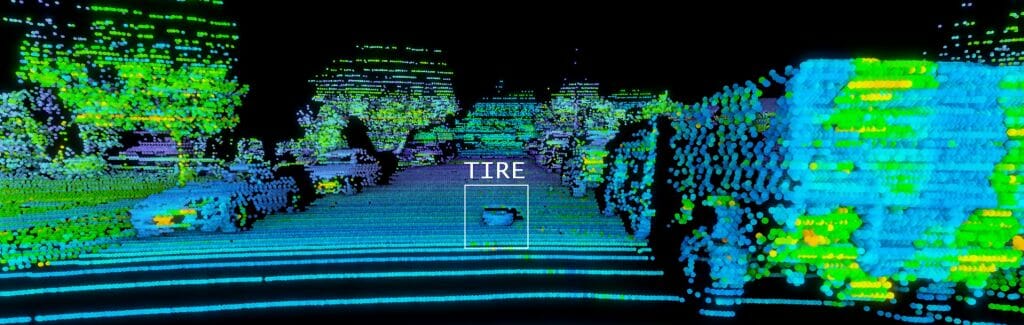

A useful example of object detection and avoidance in autonomous vehicle testing is a dangerous tire fragment on the freeway. Tire fragments are not usually large enough to spot easily from a long distance, and they are often the same color as the road surface. AV sensor technology must have high enough resolution to accurately detect the fragment’s location on the roadway. This requires distinguishing the tire from the asphalt and determining that it is a stationary object, rather than something like a small, moving animal.

In this situation, the vehicle needs not only to detect the object but also to classify it as a tire fragment that must be avoided. Then the vehicle must determine the right course of action, such as changing lanes to avoid the tire fragment without hitting another vehicle or object. To give the vehicle adequate time to change its path and speed, these steps must all happen in less than a second. These decisions made by the vehicle’s onboard computer depend on accurate data provided by the vehicle’s sensors.

A Closer Look at Sensor Technologies

Sensor technologies perceive a car’s environment and provide the onboard map with rich information about current roadway conditions. To build redundancy into self-driving systems, automakers utilize an array of sensors, including lidar, radar and cameras.

Lidar is a core sensor technology for autonomous vehicles. Lidar sensors emit laser pulses that reflect off surrounding objects, with some sensors producing millions of pulses each second. The sensor measures the time each pulse takes to “bounce off” an object and return. Multiplying this round-trip time by the speed of light and dividing by two, because the pulse travels out and back, gives the distance to the object. Gathering this distance data at an extremely high rate produces a “point cloud,” a 3D representation of the sensor’s surroundings, which localizes objects and even the vehicle’s own position to within centimeters.
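The time-of-flight calculation can be sketched in a few lines of Python. This is a minimal illustration rather than any vendor’s implementation: the function names and the azimuth/elevation convention are assumptions made for this example. Because each pulse travels to the object and back, the one-way distance is half the round-trip path.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact by definition of the metre


def range_from_round_trip(round_trip_s: float) -> float:
    """One-way distance in metres for a pulse's round-trip time in seconds."""
    # The pulse travels out and back, so halve the total path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def to_cartesian(range_m: float, azimuth_deg: float,
                 elevation_deg: float) -> tuple[float, float, float]:
    """Turn one return (range plus beam angles) into an x, y, z point.

    Assumed convention: azimuth is measured in the horizontal plane from
    the x axis, elevation is measured upward from that plane.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # projection onto the ground plane
    return (horizontal * math.cos(az),
            horizontal * math.sin(az),
            range_m * math.sin(el))
```

For example, a round-trip time of 2 microseconds corresponds to a range of roughly 300 meters. Repeating the conversion for every return in a sweep yields the points that make up the point cloud.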

Radar has long-range detection capabilities and can track the speed of other vehicles on the road. However, radar lacks the resolution and accuracy to provide the detailed, precise information supplied by lidar.

Cameras can identify colors and fonts, so they are capable of reading traffic signals, road signs and lane markings. However, unlike lidar, cameras rely on ambient light to operate and are hindered in low light and when hit with direct light. In contrast, because lidar supplies its own light, it can perform well whether it is day or night.

How Lidar Technology Enables Safe, Autonomous Operation

Lidar suppliers are advancing multiple technology approaches. For each of these approaches, the same fundamental performance metrics determine whether a lidar system can enable a fully autonomous car to operate successfully.

The key performance features for evaluating lidar technologies are field of view, range and resolution. These are the capabilities needed to guide an autonomous vehicle reliably and safely through the complex set of driving circumstances it will face on the road.

Let’s review each feature and examine how it impacts an autonomous vehicle.

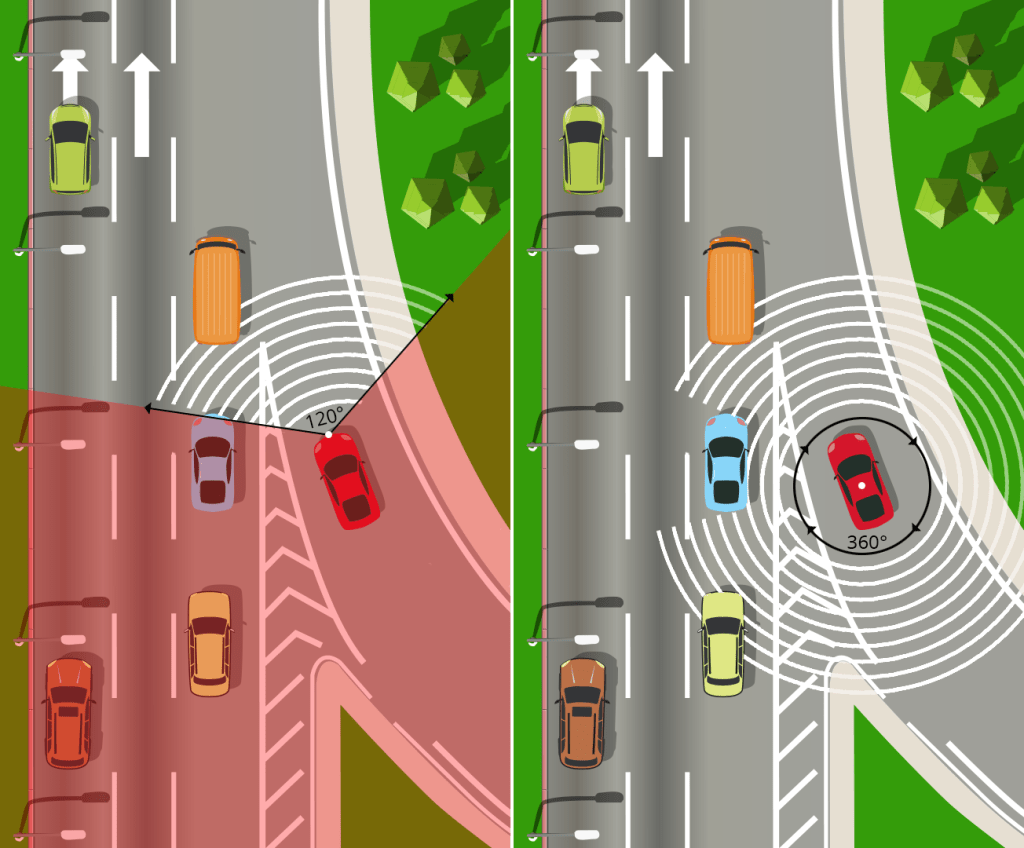

Field of View. It is widely accepted that a 360-degree horizontal field of view – something not possible for a human driver – is optimal for safe operation of autonomous vehicles. Having a wide horizontal field of view is particularly important in navigating the situations that occur in everyday driving.

For instance, consider the scenario of performing a high-speed merge onto a highway. The maneuver requires a view diagonally behind the autonomous vehicle to see if another car is coming in the adjacent lane. This also requires a view roughly perpendicular to where the vehicle is currently traveling to assess cars in the adjacent lane and confirm there is room to merge.

Throughout this process, the vehicle must look forward so it can negotiate traffic ahead of it. For these reasons, a narrow field of view would be insufficient for the vehicle to safely execute the merge maneuver. Therefore, lidar sensors that rotate are optimal for these applications because one sensor is capable of capturing a full 360-degree view. In contrast, if an autonomous vehicle employs sensors with a more limited horizontal field of view, then more sensors are required and the vehicle’s computer system must then stitch together the data collected by these various sensors.
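As a rough illustration of this trade-off, the sketch below counts how many fixed sensors would be needed to close a full 360-degree ring. The function name, the overlap parameter and the example angles are assumptions chosen for illustration, not figures for any particular sensor.

```python
import math


def sensors_for_full_coverage(horizontal_fov_deg: float,
                              overlap_deg: float = 0.0) -> int:
    """Minimum number of identical sensors needed to cover 360 degrees.

    overlap_deg is the assumed angular overlap between neighbouring
    sensors, which a stitching step would typically want.
    """
    if not 0.0 < horizontal_fov_deg <= 360.0:
        raise ValueError("field of view must be in (0, 360] degrees")
    if horizontal_fov_deg == 360.0:
        return 1  # a single rotating sensor already sees the full circle
    effective_deg = horizontal_fov_deg - overlap_deg
    if effective_deg <= 0.0:
        raise ValueError("overlap must be smaller than the field of view")
    # In a closed ring of n sensors there are n overlaps, so each sensor
    # contributes (fov - overlap) degrees of new coverage.
    return math.ceil(360.0 / effective_deg)
```

Under these assumptions, sensors with a 120-degree field of view need at least three units with no overlap and four units if neighbors overlap by 10 degrees, and every added unit is another data stream to stitch together.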

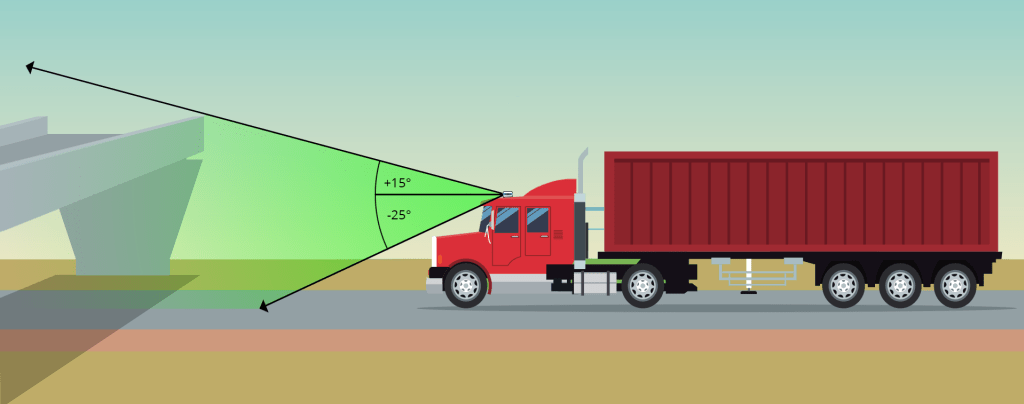

Vertical field of view is another area where it is important that lidar capabilities match real-life driving needs. Lidar needs to see the road so the vehicle can recognize the drivable area, avoid objects and debris, stay in its lane, and change lanes or turn at intersections when needed. Autonomous vehicles also need lidar beams that point high enough to detect tall objects, road signs and overhangs, and to navigate up or down slopes.

Range. Autonomous vehicles need to see as far ahead as possible to optimize safety. At highway speeds, a range of 300 meters allows the vehicle the time it needs to react to changing road conditions and surroundings. Slower, non-highway speeds allow for sensors with shorter range, but vehicles still need to react quickly to unexpected events on the roadway, such as a person focused on a mobile phone stepping onto the street from between two cars, an animal crossing the road, an object falling from a truck, or debris ahead in the road. In each of these situations, onboard sensors need sufficient range to give the vehicle adequate time to detect the person or object, classify what it is, determine whether and how it is moving, and then take steps to avoid it without hitting another car or object.
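A back-of-the-envelope calculation shows how range translates into reaction time. The sketch below assumes a stationary obstacle, constant speed before braking and constant deceleration afterward. The speed, latency and deceleration values in the example are illustrative assumptions, not figures from any vehicle specification.

```python
def time_to_reach_s(range_m: float, speed_kmh: float) -> float:
    """Seconds until the vehicle reaches a stationary object at range_m."""
    speed_mps = speed_kmh / 3.6  # convert km/h to m/s
    return range_m / speed_mps


def stopping_distance_m(speed_kmh: float, latency_s: float,
                        decel_mps2: float) -> float:
    """Distance covered while the system reacts, plus braking distance.

    latency_s is the assumed time to detect, classify and decide;
    decel_mps2 is the assumed constant braking deceleration.
    """
    speed_mps = speed_kmh / 3.6
    reaction_distance = speed_mps * latency_s
    braking_distance = speed_mps ** 2 / (2.0 * decel_mps2)
    return reaction_distance + braking_distance
```

At an assumed 120 km/h, an object first seen at 300 meters is about 9 seconds away. With an assumed 1 second of processing latency and 5 m/s² of braking, the vehicle needs roughly 144 meters to stop, which leaves margin at 300 meters of range but very little if the object is first detected at 150 meters.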

Another factor connected to range is reflectivity. Reflectivity refers to an object’s propensity to reflect light back to the sensor. Lighter colored objects reflect more light than darker objects. While many sensors are able to detect objects with high reflectivity at long range, far fewer are able to detect low-reflectivity objects at long range, which is needed for safe highway driving.
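To get a feel for how much reflectivity matters, the sketch below uses a simplified model that is an assumption of this example, not a claim about any specific sensor: for a diffuse target that fills the beam, the returned signal scales with reflectivity divided by range squared, so maximum detection range scales with the square root of reflectivity. Atmospheric losses, beam divergence and detector details are ignored, and the reference figures are hypothetical.

```python
import math


def scaled_max_range_m(reference_range_m: float,
                       reference_reflectivity: float,
                       target_reflectivity: float) -> float:
    """Estimate detection range for a darker or brighter diffuse target.

    Assumes received signal ~ reflectivity / range**2, so the range at
    which the signal hits the same detection threshold scales with
    sqrt(reflectivity). Reflectivities are fractions in (0, 1].
    """
    for value in (reference_reflectivity, target_reflectivity):
        if not 0.0 < value <= 1.0:
            raise ValueError("reflectivity must be in (0, 1]")
    ratio = target_reflectivity / reference_reflectivity
    return reference_range_m * math.sqrt(ratio)
```

Under this model, a hypothetical sensor that detects an 80 percent reflective target at 300 meters would detect a 10 percent reflective target, such as a dark object, at only about 106 meters.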

Resolution. High-resolution lidar is critical for object detection and collision avoidance at all speeds. Finer resolution allows a sensor to more accurately determine the size, shape and location of objects. This finer resolution outperforms even high-resolution radar and provides the vehicle with the clearest possible vision of the roadway.

To examine the importance of resolution, consider the earlier example of a tire fragment in the road. The lidar system needs to be able not only to detect the object but also to recognize what it is. This is no small task, given that it requires detecting a dark object on a dark surface, so a sensor with finer resolution increases the vehicle’s ability to accurately detect and classify the object.
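The effect of resolution on a small object can be estimated from geometry. The sketch below is illustrative only: it treats resolution as a fixed angular step between neighboring beams, and the 0.1-degree step, 100-meter range and 0.3-meter object width are assumed values, not specifications of any sensor.

```python
import math


def point_spacing_m(range_m: float, angular_resolution_deg: float) -> float:
    """Gap between neighbouring beams where they land at range_m."""
    half_step = math.radians(angular_resolution_deg) / 2.0
    return 2.0 * range_m * math.tan(half_step)


def beams_across(width_m: float, range_m: float,
                 angular_resolution_deg: float) -> float:
    """Average number of beams that fall across an object of width_m."""
    return width_m / point_spacing_m(range_m, angular_resolution_deg)
```

With these assumed numbers, beams land about 17 centimeters apart at 100 meters, so a 0.3-meter tire fragment is crossed by fewer than two beams on average. Halving the angular step roughly doubles that count, which is why finer resolution makes small, distant objects easier to detect and classify.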

Unlike cameras, lidar provides 3D images of the surroundings with precise measurements of how far objects are from the vehicle, which helps the vehicle respond to roadway events.

Lidar: Essential to Operating Autonomous Cars Safely

Lidar sensors produce millions of data points per second, which can enable precise, reliable navigation in real time and the detection of objects, vehicles and people that might pose a collision threat. Lidar can help autonomous vehicles navigate roadways at various speeds, in a range of light conditions and in weather such as rain, sleet and snow. Equipped with lidar, autonomous vehicles can navigate safely and efficiently in unfamiliar and dynamic environments.

Author:

Mircea Gradu, PhD

Senior Vice President Automotive Programs

Velodyne Lidar

Mircea Gradu, PhD, is Senior Vice President of Automotive Programs at Velodyne Lidar, responsible for the development and manufacturing of world-class products compliant with international quality standards and satisfying customer needs. Mircea brings over 25 years of experience in the automotive and commercial vehicle industry, which includes deep technical knowledge of design, development, manufacturing, safety and cybersecurity.

Published in Telematics Wire