Towards Seamless Edge Computing in Connected Vehicles

ABSTRACT

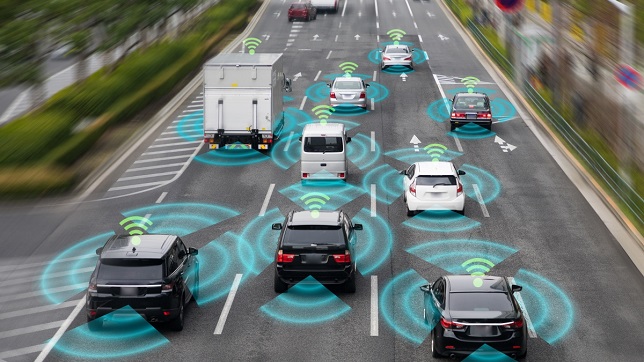

Around the world, the share of vehicles with built-in connectivity is projected to increase from about 48% in 2020 to about 96% in 2030, with connected vehicle systems playing an important role in advanced features and functions in new vehicle launches. Recent advances in the Internet of Things (IoT), the Internet of Vehicles (IoV), and 5G networks have enabled real-time value-added services that were hitherto difficult to accomplish. 5G networks supplemented with Multiaccess Edge Computing (MEC), network slicing, and task offloading enable connected vehicles to offer resource-intensive services and computing capability. Demand for good quality of service faces the dual challenges of wireless network congestion and insufficient computing resources at edge servers in IoT, with the additional challenge of high-speed vehicle movement in IoV. A potential solution virtualizes an IoT/IoV platform with the minimum functions needed to support specific IoT/IoV services and hosts the instance in an edge node. This architecture ensures that the network traffic for an end-user near the edge node need not traverse back to the cloud, because the instance at the MEC node and the network slice located at the edge node provide the service. This assures low latency and efficient management of IoT/IoV services at the edge node. Containerization is a lightweight virtualization solution for this network architecture because containers enable application and service orchestration and play a vital role in Platform-as-a-Service (PaaS) clouds. Additionally, containers, with their relatively smaller size and greater flexibility, offer benefits over traditional virtual machines in the cloud.
In the edge-cloud-enabled IoV architecture with virtualization using containers, vehicles requiring increased computation and large resources are directed to communicate with the nearest edge node or roadside unit (RSU), and the concepts of network slicing (NS), task offloading, and load balancing are applied for optimal resource utilization. Several simulations and experiments using sliced IoT/IoV functions in the MEC showed about half the transmission time of the conventional cloud-based IoT/IoV platform with almost half the resource utilization.

I. Introduction

The total number of active device connections worldwide has been growing year over year, with IoT devices showing 2.6X growth from 2015 to 2020 and an estimated 2.1X growth between 2020 and 2025, as can be seen in Figure 1 [1]. Connected vehicles are expected to account for about 96% of total vehicle sales worldwide in 2030, up from about 48% in 2020.

Billions of IoT devices need to be designed and developed, and their associated data need to be tested using test cases specific to their industries or applications. This is a mammoth task requiring high bandwidth and low latency to support real-time IoT services. The current solution, a distributed IoT architecture based on a centralized platform in the cloud, will find it increasingly difficult to provide real-time services based on massive data processing, and this will impede the scaling up of the central IoT platform. Recent research has investigated solutions based on new technologies such as multiaccess edge computing (MEC) and Artificial Intelligence/Machine Learning (AI/ML) algorithms for more efficient data management and improved real-time service [2], [3], [4], [5]. The advent of 5G networks and network slicing (NS) technology makes it possible to cater to multiple IoT service requirements using the existing network infrastructure. Network Function Virtualization (NFV) and Software-Defined Networking (SDN), both associated with 5G networks, allow flexible and scalable network slices to be implemented on top of a physical network infrastructure. The integration of SDN and NFV enables increased throughput and optimal use of network resources, which was not achievable with SDN or NFV applied individually. To realize the benefits of improved throughput and latency through the integration of SDN and NFV with network slicing on physical network infrastructure, the application server must be located at the edge node instead of at a distant location in the central cloud, so that the round-trip time (RTT) for messages from IoT devices to the server and back is reduced. In addition to an architecture that virtualizes the IoT common service functions and deploys them to an edge node, IoT resources and the associated services must also be delivered at the edge nodes.
In scenarios where IoT services are running in the cloud-based IoT platform, despite IoT common service functions supported at the edge nodes, the message/data traffic from IoT sensors still needs to traverse back and forth to the IoT platform, thus increasing the latency and reducing the overall performance efficiency. Hence, it is important for IoT platforms running in the cloud to create and manage multiple virtual IoT services catering only to the necessary common service functions. Also, an important requirement for the edge nodes is that they should handle network slicing capabilities and serve the virtualized IoT service functions in addition to the resources representing IoT data and services.

To completely leverage the advantage of 5G networks, SDN, and NFV, the architecture must be designed to avoid network traffic traversing up and down from an IoT sensor or device to the application in the cloud. The cloud-based IoT platform must be designed to provide a common set of service functions such as registration and data management while edge computing at the edge nodes must cater to local data acquisition from the sensors, data processing, and decision-making. In specific IoT or IoV use cases requiring low latency (< 1 ms) and mission-critical services such as in industrial applications or connected vehicles, even if the networks are deployed closer to the end-users using SDN/NFV, it may not be possible to reduce the round-trip time (RTT) for the messages from an end IoT device or application to the central cloud platform.

In recent research, a novel IoT architecture was proposed with two distinct features: 1) the virtualization of an IoT platform with the minimum functions needed to support specific IoT services and 2) the hosting of the IoT instance in an edge node close to the end-user. In this architecture, low latency and efficient IoT service management at the edge node are assured because the message/data traffic for the end-user need not traverse back and forth to the cloud; the IoT instance provides its service at the edge node, which is co-located with the MEC node with network slicing. Studies showed that the data transmission time in the new IoT architecture is halved compared to the conventional cloud-based IoT platform.

Recent developments in Connected Vehicles include the successful adaptation of 5G networks and SDN/NFV to provide Ultra-Reliable Low Latency Communications (URLLC). This is supplemented by Multiaccess Edge Computing (MEC) which enables vehicles to obtain network resources and computing capability in addition to meeting the ever-increasing vehicular service requirements.

In IoV architecture, the MEC servers are typically co-located with Roadside Units (RSUs) to provide computing and storage capabilities at the edge of the vehicular networks. The communications between the vehicles and the RSUs are enabled by small cell networks. An access point (AP) is provided at the local 5G base station (BS) where the edge servers are typically co-located. The vehicles or end-users can access the services through the AP at the BS through the edge server located nearby, which improves the latency for the IoT devices compared to the communication with a remote IoV cloud platform.

Network Slicing in Connected Vehicles

The increased computing power available within modern vehicles helps carry out numerous in-vehicle applications. However, specific requirements such as autonomous driving capabilities and new applications such as dynamic traffic guidance, human-vehicle dynamic interaction, and road/weather-based augmented reality (AR) demand powerful computing capability, massive data storage/transfer/processing, and low latency for faster decision-making. Recent research has provided a new IoT architecture comprising two core concepts: network slicing and task offloading.

Figure 2. NS and MEC architecture in a 5G environment, as proposed in 3GPP [6]

Network slicing divides a physical network into dedicated, logically separate network instances that provide service to the end-users. The NS and MEC architecture in a 5G environment, as proposed in 3GPP standards, is shown in Figure 2 [6]. Through Network Slicing (NS) technology, which builds on Software-Defined Networking (SDN) and Network Function Virtualization (NFV), physical network infrastructure can be shared to provide flexible and dynamic virtual networks tailored to specific services or applications. NS adopts NFV and enables the division of IoT/IoV services by functionality, which offers flexibility and fine granularity in executing functions at the edge nodes. Each network slice is assured of the availability of network resources such as virtualized server resources and virtualized network resources. In the context of connected vehicles, as outlined in the 3GPP standards, a network slice is treated as a separate, self-contained network that provides specific network capabilities akin to a regular physical network. Each network slice is isolated from the other slices so that errors or failures in one slice do not affect communication in the others. From the vehicle-to-everything (V2X) perspective, NS helps support specific use cases such as cooperative maneuvering, autonomous capabilities, remote driving, and enhanced safety. Some of these use cases have conflicting and diverse requirements related to high-end computing, latency, dynamic data storage and processing, throughput, and reliability. Using NS, one or more network slices can be designed and bundled to support multiple conflicting V2X requirements while still providing Quality of Service (QoS).

Task Offloading in Connected Vehicles

A schematic of dynamic task offloading in vehicular edge computing is shown in Figure 3 [7] which contains multiple network access points around the moving vehicles. As the vehicle moves from the top right corner to the new position in the direction indicated by the arrow, the task units (TUs) are initially offloaded to the edge server numbered 1, close to the initial position of the car. To maintain the Quality of Service (QoS) during the movement of the vehicle, the unfinished TUs need to be offloaded to a new edge server (either 4 or 5 or 6) close to the new position of the car. A similar concept of data offloading integrated with cellular networks and vehicular ad hoc networks is shown in Figure 4 [8].
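The handover of unfinished task units described above can be illustrated with a minimal sketch. It assumes, purely for illustration, that the target server is chosen by straight-line distance to the vehicle's new position; the `EdgeServer` class, the coordinates, and the function names are hypothetical and are not taken from [7].

```python
import math
from dataclasses import dataclass


@dataclass
class EdgeServer:
    """Hypothetical edge server with a planar position."""
    server_id: int
    x: float
    y: float


def nearest_server(servers, x, y):
    """Return the edge server closest to position (x, y)."""
    return min(servers, key=lambda s: math.hypot(s.x - x, s.y - y))


def reassign_unfinished(task_units, finished, servers, new_x, new_y):
    """Map every unfinished task unit to the server nearest the new position."""
    target = nearest_server(servers, new_x, new_y)
    return {tu: target.server_id for tu in task_units if tu not in finished}


# Illustrative layout: server 1 near the start, servers 4-6 near the destination.
servers = [
    EdgeServer(1, 9.0, 9.0),
    EdgeServer(4, 1.0, 1.0),
    EdgeServer(5, 3.0, 0.0),
    EdgeServer(6, 0.0, 4.0),
]
plan = reassign_unfinished(["tu1", "tu2", "tu3"], {"tu1"}, servers, 2.5, 0.5)
```

A real scheduler would also weigh server load, link quality, and the predicted vehicle trajectory; distance alone is used here only to keep the sketch short.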

Recent studies propose the concept of IoT/IoV task offloading along with Network Slicing to provide similar quality of services at the edge nodes by creating IoT/IoV resources that are typically operated in the central cloud platform. As shown in Figure 5 [8], multiple technologies have evolved in V2X communications on task offloading and load reduction in wireless communication networks.

Task offloading is segregated into three categories: 1) through V2V communications, 2) through V2I communications, or 3) through V2X communications (a hybrid of multiple methods). By deploying both the IoT/IoV service slice and the 5G network slice at the same edge node close to the end-users, IoT/IoV functions and services can be provided seamlessly and efficiently, with the latency for data or message transmission reduced by up to half. To carry out increasingly complex and powerful computations requiring large data storage resources in modern vehicular communication networks, edge computing nodes hosted at 5G next-generation NodeBs (gNBs) or roadside units (RSUs) are utilized, with network slicing and resource-optimal load balancing applied. This concept uses the NFV framework to manage the data, balances the loads between various slices per node, and supports multiple edge computing nodes, resulting in savings of up to 48% in resources.
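As a rough illustration of balancing load across edge computing nodes, the sketch below uses a simple greedy least-loaded heuristic. This is a swapped-in textbook technique, not the resource-optimal method referred to above, and the task names, load values, and node names are hypothetical.

```python
import heapq


def balance(task_loads, node_names):
    """Greedy heuristic: place each task, largest first, on the least-loaded node."""
    heap = [(0.0, name) for name in node_names]  # (current load, node name)
    heapq.heapify(heap)
    assignment = {}
    ordered = sorted(task_loads.items(), key=lambda kv: kv[1], reverse=True)
    for task, load in ordered:
        current, name = heapq.heappop(heap)  # node with the smallest load so far
        assignment[task] = name
        heapq.heappush(heap, (current + load, name))
    return assignment


placement = balance({"a": 5, "b": 3, "c": 2}, ["n1", "n2"])
```

In this example both nodes end up with a total load of 5, which is the kind of even spread a load balancer aims for.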

Edge Computing

In a cyber-physical system (CPS), the edge is likely to be the system to which IoT devices are connected. An infrastructure able to support different kinds of edge applications, given this definition of the edge, might be quite complex. A representative complex cloud infrastructure is shown in Figure 6 [3], with heterogeneous IoT devices, multiple clouds, gateways, mobile base stations, and cloudlets. To realize the benefits of edge computing and leverage the ultra-low latency and ultra-high reliability of 5G networks with little impact on security and data privacy, it is imperative to move the physical infrastructure elements closer to where the data needs to be processed. The value chain in edge computing is being transformed into a value network through enhanced connectivity and 5G network availability, aided by new applications and services such as Artificial Intelligence (AI), IoT, and IoV, and by the demand to provide services and data/messages in real-time with low latency and ultra-high reliability.

A cloud-based architecture for connected vehicles is shown in Figure 7 [9]. It combines wired and wireless communications and presents the integrated cloud services connecting non-transportation units (NTUs), traffic management and control, roadside infrastructure, connected vehicles, and vulnerable road users (VRUs). As the number of connected vehicles increases dramatically over the next decade, along with the volume of data from telematics, infotainment, location-based services, and similar sources, the underlying mobile networks will come under enormous strain, which justifies the combination of 5G networks and Multiaccess Edge Computing (MEC). Embedded edge computing in vehicles is urgently needed because it provides a framework for handling the increasing number of connected vehicles and the associated large data transfer and processing in existing networks. For a cost-effective solution, the commercial cloud and the associated hardware and software provide a viable option. The cloud architecture shown in Figure 7 comprises an infrastructure layer, a platform layer, and a software layer [9]. The bottom infrastructure layer provides infrastructure-as-a-service (IaaS) to cloud users and application developers in the form of server instances (virtual machines) backed by hardware components, along with database resources and operating systems. The middle platform layer relieves developers of managing the underlying infrastructure and forms the basis for platform-as-a-service (PaaS) by enabling them to build applications using multiple cloud services, including streaming, non-streaming, computing, and database management services. The top software layer provides software-as-a-service (SaaS) to developers, who can upload data from NTUs, transportation infrastructure, connected vehicles, or VRUs (Figure 7), deploy their applications in the cloud, and monitor and analyze the output from the applications running in the cloud.

Depending upon the needs of connected vehicle applications and the requirements, the cloud-based architecture shown in Figure 7 can operate in real-time or non-real-time. The cloud services must meet the Quality of Service (QoS) agreements through compliance with the computing and latency requirements. Besides, for efficient real-time cloud services of connected vehicles, the components of the architecture must meet the requirements related to real-time cloud computing, real-time data transmission, and data archiving. A reference edge computing platform consisting of a device layer, edge cloud layer, network layer, back-end layer, and application layer is shown in Figure 8 [10].

Real-time Cloud Computing

Real-time cloud computing in a cloud-based connected vehicles application involves multiple activities such as data acquisition from IoT devices, data uploading from the database, data processing, and message/data downloading, all while meeting the latency requirements. A server-based architecture requires application developers to establish a cloud server instance to carry out their computing needs. This in turn demands significant expertise, cost, and time for hardware setup and virtual environment configuration. Additionally, this arrangement is not conducive to the dynamic scaling of computing resources to meet varying demands. To meet these challenges, a new “serverless cloud computing architecture” has emerged. It relieves application developers of the burden of setting up or configuring the hardware and virtual environment, lets them focus exclusively on application development, and scales dynamically to allocate resources with no additional requests or intervention from the developers. Because serverless architecture is not burdened with the cumbersome, expensive, and time-consuming establishment and maintenance of server instances, it quickly evolved into a cost-effective alternative to server-based computing.

The details of and important differences between server-based and serverless cloud computing are provided next. The cloud infrastructure shown in Figure 9 can be segregated into two types: server-based as shown in Figure 9 (a) or serverless as shown in Figure 9 (b).

Figure 9. Cloud Infrastructure: (a) Server-based and (b) Serverless [9]

Server-based Cloud Computing

For a real-time or a non-real-time cloud computing application, developers first need to establish a cloud server instance in the commercial cloud, as shown in Figure 9 (a) [9]. For a given application, the server instance calls for the configuration of dedicated or virtualized hardware (e.g., CPU, storage, memory), operating systems (OS), and software coding platforms (e.g., language environment, compilers, libraries). The Application Programming Interface (API) helps the applications interact with other cloud services to carry out different functionalities. Although developers can customize the computing capabilities to meet specific demands, they are required to be experts in configuring and maintaining server instances and to spend a sizeable amount of time and effort on this. Further, because server-based cloud computing does not scale dynamically, the potential wastage of computing capacity is high when specific applications need fewer resources than were provisioned.

Serverless Cloud Computing

In the case of serverless cloud computing, as shown in Figure 9 (b) [9], the application developers a) are not required to establish server instances, b) can focus solely on application development, and c) are relieved of the burden of creating, configuring, maintaining, and operating the server instances. This architecture supports multiple programming languages such as Python, .NET, Java, and Node.js. The applications in serverless cloud computing are typically configured to be activated automatically by specific triggers such as input data availability, a database update, or the actions of other cloud services, and they remain active until the task is completed. Upon task completion, the commercial cloud automatically releases the computing resources, which enables dynamic scaling under varying loads. This results in a more efficient and cost-effective cloud architecture with additional savings in time and effort.
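A trigger-activated function of this kind might look like the sketch below. The `handler(event, context)` signature follows the convention used by AWS Lambda's Python runtime; the layout of the event (a `records` list of vehicle readings with a `speed_kmh` field) is a hypothetical example and not a real service schema.

```python
import json


def handler(event, context=None):
    """Entry point the platform would invoke when a trigger fires.

    The event layout used here is an assumption made for illustration.
    """
    records = event.get("records", [])
    speeds = [r["speed_kmh"] for r in records if "speed_kmh" in r]
    average = sum(speeds) / len(speeds) if speeds else None
    body = {"count": len(speeds), "avg_speed_kmh": average}
    return {"statusCode": 200, "body": json.dumps(body)}


# Local invocation with a hand-built event, standing in for a platform trigger.
result = handler({"records": [{"speed_kmh": 40}, {"speed_kmh": 60}]})
```

The function holds no state between invocations, which is what allows the platform to start and release instances as the load varies.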

Function as a Service (FaaS), also known as serverless computing, allows developers to upload and execute code in the cloud easily without managing servers, by making hardware an abstraction layer in cloud computing [11], [12], [13]. FaaS computing enables developers to deploy several short functions with clearly defined tasks and outputs without worrying about deploying and managing software stacks in the cloud. The key characteristics of FaaS include resource elasticity, zero ops, and pay-as-you-use. Serverless cloud computing frees developers from the responsibility of server maintenance and supports event-driven distributed applications that can use a set of existing cloud services directly, such as cloud databases (Firebase or DynamoDB), messaging systems (Google Cloud Pub/Sub), and notification services (Amazon SNS). Serverless computing also enables the execution of custom application code in the background using special cloud functions such as AWS Lambda, Google Cloud Functions (GCF), or Microsoft Azure Functions. Compared with IaaS (Infrastructure-as-a-Service), serverless computing offers a higher level of abstraction and hence promises to deliver higher developer productivity and lower operating costs. Functions form the basis of serverless computing, each focusing on the execution of a specific operation. They are essentially small software programs that usually run independently of any operating system or other execution environment and can be deployed on the cloud infrastructure, triggered by an external event such as a) a change in a cloud database (uploading or downloading of files), b) a new message or an action scheduled at a pre-determined time, or c) a direct request from the application made through an API. Several short functions can run in parallel, independent of each other and managed by the cloud provider.
Trigger events can also include a request to an interface that the cloud provider manages for the customer, in which case the developer writes the function that handles that event.

In serverless computing, functions are hosted in an underlying cloud infrastructure that provides monitoring and logging as well as automatic provisioning of resources such as storage, memory, and CPU, and scaling to adjust to varying functional loads. Developers focus solely on providing executable code as mandated by the serverless computing framework and have the flexibility to work with multiple programming languages, such as Node.js, Java, and Python, that are supported by platforms such as AWS Lambda and Google Cloud Functions [11], [12]. However, developers depend entirely on the execution environment, underlying operating system, and runtime libraries chosen by the provider, although they are free to upload executable code in the required format and to use custom libraries along with the associated package managers. The fundamental difference between functions and Virtual Machines (VMs) in IaaS cloud architecture is that users of VMs have full control over the operating system (OS), including root access, and can customize the execution environment to suit their requirements, whereas developers using functions are not burdened with the cumbersome tasks of configuring, maintaining, and managing server resources. Functions essentially serve individual tasks and typically live only for the duration of their execution, unlike long-running, permanent, and stateful services. Hence, functions are more suitable for high-throughput computations consisting of fine-grained tasks, with serverless computing offering cost-effective solutions compared to VMs [11], [12], [13].

Recently, novel architectural concepts have been proposed that virtualize IoT/IoV common service functions to provide services at the edge nodes close to the end-users. Further, studies indicate the benefit of edge computing through the offering of virtualized IoT services at the same edge node with a 5G network slice to achieve higher throughput and lower latency and reduce RTT by half compared to the earlier concept.

Serverless Computing — Scalability

In a real-world dynamic situation of connected vehicles with peak and lean periods of traffic and changing road and weather conditions, the associated cyber-physical system (CPS) must also scale automatically. Even though serverless cloud computing is known to be automatically scalable, limitations are introduced by the underlying commercial cloud service providers (e.g., AWS, Azure, Google Cloud) who set upper limits on memory usage, limiting the support for a large-scale CPS in real-time. To overcome this, a concept is proposed wherein the functions handling traffic and connected vehicles operate in parallel to improve the throughput so a large amount of data could be handled without compromising on the latency requirements [11], [12].
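The idea of running functions in parallel to stay within per-function limits can be sketched locally as follows. A thread pool stands in for concurrent function invocations, and the batch size, the speed threshold of 80, and all function names are hypothetical choices made for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def split_batches(items, batch_size):
    """Split the input into batches small enough for one function invocation."""
    return [items[i:i + batch_size] for i in range(0, len(items), batch_size)]


def process_batch(batch):
    """Stand-in for one function invocation: count speed readings above 80."""
    return sum(1 for speed in batch if speed > 80)


def process_in_parallel(items, batch_size, max_workers=4):
    """Process all batches concurrently and combine the partial results."""
    batches = split_batches(items, batch_size)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return sum(pool.map(process_batch, batches))


total = process_in_parallel([70, 90, 85, 60, 100], batch_size=2)
```

Because each batch is processed independently, the memory needed by any single invocation is bounded by the batch size rather than by the total data volume.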

IoT Function Modularization Using Microservices and Virtualization

One of the first steps toward offering IoT/IoV services through network slicing is the modularization of the IoT/IoV platform. In current practice, a centralized cloud service deploys almost all the IoT service functions, such as device management, registration, and discovery. This poses a challenge for users expecting different types of IoT services, computational resources, and modularized IoT functions at the edge. The challenge can be addressed by dividing the IoT platform into multiple small components offered as microservices, which can be deployed, scaled, and tested independently, improving the efficiency and agility of services and functions. The modularized IoT functions thus provide a flexible and agile development environment in which software is deployed independently and dependency on the centralized cloud is reduced.

Container Technologies

Recent developments have resulted in a container-based architecture in which software packaged in containers can be deployed more easily to heterogeneous systems, as a virtual OS and file system executing on top of the native system [11], [12]. Container technologies make code portable so that it runs properly and independently, irrespective of the hardware architecture or operating system on which it is running. Containers thus allow developers to test code on separate development machines and, after a successful run, deploy it in the IoT platform to run with other functions. Containers provide multiple advantages, including fast start-up time, low overhead, and good isolation, and developers need to build, compile, test, and deploy only once in the virtualized platform. The virtualization layer takes responsibility for translating the actions inside the container for the underlying system, without burdening the developers. Further, containers provide an isolated sandbox environment for the programs residing in them. Hence, developers find containers easier to work with, because they can be constrained more easily than programs running on the native platform. Also, because a container is essentially an isolated sandbox, spurious software or malware that has affected one container may not impact the rest of the applications. In an application or a software stack, an overseeing container orchestration framework can control multiple containers (sandboxes) running in parallel without one container affecting another, and it can easily deploy and manage large container-based software stacks. The drawback of running software in a sandboxed container is that the virtualization and isolation add software overhead and reduce performance, which may not be a hindrance on high-performance computing (HPC) machines but may impact IoT devices with edge computing.
Still, containers, with their virtualization of multiple platforms and services, are a preferred choice among software developers, with Docker and WebAssembly (Wasm) finding increasing acceptance in a large software community for implementing microservices. Though Docker is currently the dominant container technology, its shortcomings include large and complicated systems and limitations when running on hardware-constrained IoT devices. WebAssembly containers, though relatively new, appear to be a promising solution for running small, lightweight containers and, with their simpler runtime, are better suited to hardware-constrained IoT devices in edge computing. Additionally, many software developers working with 5G networks rely on Kubernetes, an open-source container orchestration system, for efficient management of operational resources and for scaling with varying load demands [11], [12], [13].

Multiaccess Edge Computing combined with Network Slicing & Task Offloading

As the number of IoT devices has been increasing significantly year over year, the European Telecommunications Standards Institute Multiaccess Edge Computing (ETSI MEC) industry specification group (ISG) has been working on standards development, use cases, architecture, and application programming interfaces (APIs) to overcome the latency challenge and assure ultra-high reliability during real-time services in connected vehicles, smart cities, smart factories, etc. The ISG is also working on creating an open environment to provide a vendor-neutral MEC framework and on defining the MEC APIs for IoT systems.

Figure 10 shows a reference architecture for IoT network slicing and task offloading [14]. As explained earlier, using the concept of network or service slicing, the common service functions of the IoT platform can be modularized into small microservices and deployed at the edge nodes. Here, only the necessary IoT microservices are selected as required to create a virtual IoT platform instance and deploy it towards the edge nodes. This improves the efficiency as each IoT service slice is optimized for a specific use case and provides scalability in providing multiple IoT services as required. The IoT platform in Figure 10 [14] depicts a Resource-Oriented Architecture (ROA) wherein task offloading is carried out through the transfer of necessary IoT resources from the cloud to the edge nodes for executing the requested service.

As shown in Figure 10 [14], there are multiple service requests (Home, Automotive, Drone, Factory, etc.) that may need low latency. The IoT platform checks which IoT common service functions are required to satisfy the requests from different IoT devices and accordingly assigns an IoT slice that contains the required common service functions as microservices. The IoT platform then checks the availability of MEC nodes around the specific IoT device and selects one MEC node to host the instantiated IoT slice. Next, the IoT platform offloads the resources to the MEC node to execute the service requested by the IoT device, thus fulfilling both IoT network slicing and task offloading.
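The sequence just described (identify the required functions, assign a slice, select an MEC node, offload) can be sketched as below. The function catalogue, the `MecNode` fields, and the selection rule (the nearest node with enough free CPU, falling back to the cloud) are assumptions made for illustration; the source does not specify how the MEC node is chosen.

```python
from dataclasses import dataclass

# Hypothetical catalogue: common service functions needed per service type.
SERVICE_FUNCTIONS = {
    "automotive": {"registration", "data_management", "location"},
    "home": {"registration", "data_management"},
}


@dataclass
class MecNode:
    """Hypothetical MEC node near the requesting device."""
    name: str
    distance_km: float
    free_cpu: float


def build_slice(service_type):
    """Select only the common service functions the request needs."""
    return frozenset(SERVICE_FUNCTIONS[service_type])


def select_mec_node(nodes, required_cpu):
    """Assumed rule: nearest node with enough free CPU, or None if none fits."""
    candidates = [n for n in nodes if n.free_cpu >= required_cpu]
    return min(candidates, key=lambda n: n.distance_km) if candidates else None


def handle_request(service_type, nodes, required_cpu):
    """Assign a slice and pick a host; fall back to the cloud if no node fits."""
    iot_slice = build_slice(service_type)
    node = select_mec_node(nodes, required_cpu)
    if node is None:
        return {"slice": iot_slice, "host": "cloud"}
    node.free_cpu -= required_cpu  # reserve capacity for the offloaded resources
    return {"slice": iot_slice, "host": node.name}


nodes = [MecNode("mec-a", 0.5, 1.0), MecNode("mec-b", 1.2, 4.0)]
placed = handle_request("automotive", nodes, required_cpu=2.0)
```

Here the closer node `mec-a` is skipped because it lacks capacity, so the slice is hosted on `mec-b`.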

For the effective realization of MEC and the offering of services at the edge nodes, it is imperative to carry out network slicing and task offloading, as well as data transfer and processing and the containerization of IoT functions at the edge nodes. A task is identified as a set of resources representing physical infrastructure, sensors, RSUs, etc., with associated data. Once a suitable edge node has been identified to serve a sliced IoT platform, task offloading must be carried out to place the resources required to support the service at the edge node. Figure 11 [14] shows a use case of connected vehicles operating in a smart city, in which messages about pedestrians on a crosswalk are passed on to the cars approaching the intersection; it compares two scenarios, before and after task offloading. In Figure 11 (a), task A (car A) and task B (pedestrian citizen A) are assumed to be running on the central IoT cloud platform, while task C (pedestrian citizen B) is assumed to be running on the edge node. Pedestrian citizen B will therefore experience edge-based IoT services. To meet specific latency requirements, it is imperative for the central IoT cloud to offload the tasks from the cloud to the edge gateway, with the resource structure containing the CSE (Common Service Entity), AE (Application Entity), and Container reorganized as shown in Figure 11 (b). Car A can now receive faster IoT services than in the earlier case because of the reduced latency. Similar use cases for connected vehicles involving microservices at the edge nodes need to be prepared, as per the guidelines given for MEC by ETSI.

IoT Service Slicing based on Microservices

It is generally believed that if the edge nodes have enough CPU and memory resources, deploying an IoT service platform that supports all common service functions at the edge nodes is relatively easy. The challenge lies in providing granular IoT services at edge nodes with limited CPU and memory resources, using slicing and task offloading based on microservices. The advantages of a microservices-based IoT platform include a) less complexity in rebooting the system, especially the microservices at the edge nodes, in case of unexpected errors, b) no need for subscription or notification functionalities in hardware for seemingly simple tasks at the edge nodes such as temperature or voltage measurement, and c) no stringent latency requirements for some IoT services such as smart homes [14]. Although different IoT services have different latency and computation requirements, they can be operated on the same edge nodes. To ensure the highest Quality of Experience (QoE) for end-users, the IoT service must be designed to handle the varying resource requirements (latency and computation) at the edge nodes dynamically, rather than assigning similar resources to every task, including seemingly simple ones, which leads to inefficiencies.
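
One way to picture requirement-aware allocation on a constrained edge node is a greedy admission pass. The following is a hypothetical sketch: the service names, resource figures, and the "strictest latency first" ordering are assumptions chosen for illustration, not a policy described in [14].

```python
def allocate(services, cpu_capacity, mem_capacity_mb):
    """Admit services in order of strictest latency requirement.

    Each service is a dict with 'name', 'cpu', 'mem_mb', and 'max_latency_ms'.
    Returns (admitted_names, deferred_names); deferred services would stay
    in the cloud or wait for another edge node.
    """
    admitted, deferred = [], []
    for svc in sorted(services, key=lambda s: s["max_latency_ms"]):
        if svc["cpu"] <= cpu_capacity and svc["mem_mb"] <= mem_capacity_mb:
            cpu_capacity -= svc["cpu"]
            mem_capacity_mb -= svc["mem_mb"]
            admitted.append(svc["name"])
        else:
            deferred.append(svc["name"])
    return admitted, deferred

# Hypothetical microservices competing for one small edge node.
services = [
    {"name": "smart_home", "cpu": 0.5, "mem_mb": 128, "max_latency_ms": 1000},
    {"name": "collision_warning", "cpu": 1.0, "mem_mb": 256, "max_latency_ms": 20},
    {"name": "video_analytics", "cpu": 1.5, "mem_mb": 512, "max_latency_ms": 100},
]
admitted, deferred = allocate(services, cpu_capacity=2.0, mem_capacity_mb=1024)
```

Here the latency-critical service is placed first, the heavier analytics service no longer fits, and the lightweight smart-home service uses the remaining capacity instead of each service receiving an identical share.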

IoT Service with inherent Trust and Security using Blockchain

In an IoT platform offering microservices, in line with the blockchain framework that uses a decentralized public ledger, data is stored at the edge nodes of the network in a block structure in which the blocks are logically connected to each other through hash values. To protect against security breaches, data forgery, data alterations, and cyberattacks that may compromise data and privacy, these data blocks are copied and shared across the entire network of the blockchain system [14]. A MEC framework with network slicing and task offloading may face multiple security challenges due to a) heterogeneous data transfer across different IoT stakeholders and organizations, b) unauthorized access to private data, and c) data replication or unauthorized publishing of data. Heterogeneous data transfer is handled through transparent and secure collaboration with other servers or applications. The issues of data privacy and of duplication or unauthorized publication arise from the separation of ownership and control of data and from the outsourcing of complete or partial responsibility when data is transferred between edge nodes or to the data center in the cloud. Compromised data is a particular concern because of the rapidly changing conditions at the IoT nodes and the openness associated with edge computing, which can undermine data integrity or allow hackers to access or modify the data. These issues can be resolved using blockchain technology that connects MEC instances with the IoT platform to increase trust among IoT service slices.
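
The hash-linked block structure can be illustrated with a short sketch. It assumes SHA-256 over a JSON encoding of each block and uses hypothetical helper names; it shows only how linking by hash exposes tampering, and omits distribution and consensus.

```python
import hashlib
import json

GENESIS_PREV_HASH = "0" * 64  # placeholder predecessor for the first block

def block_hash(index, data, prev_hash):
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_block(chain, data):
    """Append a new block linked to the current tail of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_PREV_HASH
    index = len(chain)
    chain.append({
        "index": index,
        "data": data,
        "prev_hash": prev_hash,
        "hash": block_hash(index, data, prev_hash),
    })

def is_valid(chain):
    """Recompute every hash and check each link to the previous block."""
    prev_hash = GENESIS_PREV_HASH
    for i, block in enumerate(chain):
        if (block["index"] != i
                or block["prev_hash"] != prev_hash
                or block["hash"] != block_hash(i, block["data"], prev_hash)):
            return False
        prev_hash = block["hash"]
    return True

chain = []
append_block(chain, {"sensor": "crosswalk-cam", "event": "pedestrian"})
append_block(chain, {"sensor": "rsu-7", "event": "vehicle-approach"})
```

Altering the data in any stored block changes its recomputed hash, so `is_valid` fails for that block and the break is visible to every node holding a copy.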

Certain IoT platforms with network slicing and blockchain support a resource that controls access rights, which in turn allows an internally generated authentication key value to be stored and used for device authentication. Before an IoT platform responds to a service request, it checks the authentication key value for a match with the stored values. However, the IoT platform still needs to verify that the data exchanged among the edge nodes is reliable and has not been tampered with, compromised on privacy, or published without proper authorization. In this regard, an IoT device that uses services from the edge nodes, or data on the IoT device or cloud interface, needs to carry out a blockchain-based data synchronization mechanism.
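
The key-matching step that precedes a service response might look like the sketch below. The key store, device identifiers, and function name are hypothetical; the only library call relied on is Python's `hmac.compare_digest`, used so that the comparison takes constant time.

```python
import hmac

# Hypothetical store of authentication key values generated by the platform.
STORED_KEYS = {
    "vehicle-001": "k3y-abc",
    "rsu-007": "k3y-xyz",
}

def is_authenticated(device_id, presented_key):
    """Return True only if the presented key matches the stored key value."""
    stored = STORED_KEYS.get(device_id)
    if stored is None:
        return False  # unknown device: no stored value to match against
    return hmac.compare_digest(stored.encode("utf-8"),
                               presented_key.encode("utf-8"))

def handle_request(device_id, presented_key, service):
    """Respond to a service request only after the key check passes."""
    if not is_authenticated(device_id, presented_key):
        return "rejected"
    return f"serving {service} to {device_id}"
```

As the text notes, passing this check authenticates the device but says nothing about whether the data it later exchanges has been altered, which is where the blockchain layer comes in.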

As shown in Figure 12, the edge computing architecture can offer more secure and trustworthy services by incorporating blockchain into the edge nodes where data are processed locally. As the IoT devices, edge nodes, service instances, and the cloud are connected within the same blockchain network, trustworthy data transfer and information exchange are assured by a consensus mechanism used in blockchain technology. The MEC-enabled blockchain for IoT slicing and task offloading shown in Figure 12 supports information and data storage and distribution to the connected blockchain entities.
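
As a rough illustration of how agreement among connected entities can be checked, the sketch below applies a simple strict-majority vote over the latest block hash each entity reports. This is a deliberately simplified stand-in, not the consensus mechanism used in [14] or in any particular blockchain; the entity names and hash values are hypothetical.

```python
from collections import Counter

def majority_hash(reported):
    """Return the hash reported by a strict majority of entities, else None.

    `reported` maps an entity name to the latest block hash it holds.
    """
    if not reported:
        return None
    value, count = Counter(reported.values()).most_common(1)[0]
    return value if count > len(reported) / 2 else None

# Hypothetical view of the network: one entity holds a diverging copy.
reported = {
    "iot-device": "hash-1",
    "edge-node": "hash-1",
    "service-instance": "hash-1",
    "cloud": "hash-2",
}
agreed = majority_hash(reported)
```

An entity whose copy differs from the agreed value can then be treated as out of sync or compromised and asked to resynchronize.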

Conclusion

With the number of IoT devices growing multifold into the billions, supported by advances in IoT technology, numerous value-added services are being offered as new possibilities. New technologies such as MEC, along with network slicing and task offloading, enable IoT to offer more advanced services in real-time. Although the launch of 5G networks has opened new avenues of use cases and business opportunities by offering low latency and ultra-high reliability among other advantages, conventional IoT service platforms in the cloud may still fall short of providing real-time services efficiently to billions of IoT devices. This article has compared server-based and serverless cloud computing and their relative advantages, followed by a detailed explanation of container technologies and the concept of IoT service slicing based on microservices. Recent studies have extended the reference architecture of MEC to include containerization toward serverless edge computing, successfully combining network slicing and task offloading to improve latency. This has been further enhanced through blockchain at the edge nodes, IoT devices, and service instances, which ensures trustworthy data transfer and information exchange.

Future Work

One aspect of future work would be the optimization of Quality of Service (QoS) in terms of latency, security, and range of services at the IoT edges while taking into consideration network availability, network slicing, and task offloading. Future research should also evaluate the relative performance of serverless and server-based cloud architectures for connected vehicle applications in terms of cost, reliability, RTT, and security in real-world scenarios in different parts of the world, as per the use cases recommended by 3GPP and ETSI. Another opportunity for future research is a comparison of commercial cloud service providers such as Microsoft Azure, GCP, and AWS, applying different connected vehicle use cases in real-world traffic and driving conditions. The use case evaluation should also include dedicated cybersecurity tests for connected vehicle applications with serverless computing at the edge nodes, covering identity protection, data privacy, authentication, data integrity, unauthorized data publishing, etc. Future work may also include variability of network connections through drones or unmanned aerial vehicles in remote areas to study the impact on the QoS of connected vehicles, especially for augmenting Advanced Driver Assistance Systems (ADAS) functions such as collision avoidance, frontal collision warning, and lane departure warning. The dynamic management of containers using AI technologies, and a comparison of approaches to virtualizing microservices at the edge nodes based on effective containerization, are further potential areas of future research.

References

1. K.L. Leuth, The State of IoT in 2018: Number of IoT Devices now at 7B – Market Accelerating, Aug 8, 2018. https://iot-analytics.com/state-of-the-iot-update-q1-q2-2018-number-of-iot-devices-now-7b/

2. Datatronic, How does containerization help the automotive industry? What are the advantages of Kubernetes rides shotgun?

3. S. Rac and M. Brorsson, At the Edge of a Seamless Cloud Experience, https://arxiv.org/pdf/2111.06157.pdf

4. J. Napieralla, Considering Web Assembly Containers for Edge Computing on Hardware-Constrained IoT Devices, M.S. Thesis, Computer Science, Blekinge Institute of Technology, Karlskrona, Sweden (2020).

5. B. I. Ismail, E.M. Goortani, M.B. Ab Karim, et al., Evaluation of Docker as Edge computing platform, 2015 IEEE Conference on Open Systems (ICOS), 2015, pp. 130-135, https://doi.org/10.1109/ICOS.2015.7377291

6. A. Ksentini and P. A. Frangoudis, Toward Slicing-Enabled Multi-Access Edge Computing in 5G, IEEE Network, vol. 34, no. 2, pp. 99-105, (2020), https://doi.org/10.1109/MNET.001.1900261.

7. L. Tang, B. Tang, et al., Joint Optimization of network selection and task offloading for vehicular edge computing, Journal of Cloud Computing: Advances, Systems and Applications (2021), https://doi.org/10.1186/s13677-021-00240-y

8. H. Zhou, H. Wang, X. Chen, et al., Data offloading techniques through vehicular Ad Hoc networks: A survey, IEEE Access, vol. 6, pp. 65250-65259 (2018), https://doi.org/10.1109/ACCESS.2018.2878552.

9. H. -W. Deng, M. Rahman, M. Chowdhury, M. S. Salek and M. Shue, Commercial Cloud Computing for Connected Vehicle Applications in Transportation Cyberphysical Systems: A Case Study, IEEE Intelligent Transportation Systems Magazine, vol. 13, no. 1, pp. 6-19, (2021), https://doi.org/10.1109/MITS.2020.3037314.

10. A. Wang, Z. Zha, Y. Gui, and S. Chen, Software-Defined Networking Enhanced Computing: A Network-Centric Survey, Proceedings of the IEEE, vol. 107, no. 8, pp. 1500-1519, (2019), https://doi.org/10.1109/JPROC.2019.2924377

11. M. Malawski, A. Gajek, A. Zima, B. Balis, K. Figiela, Serverless execution of scientific workflows: Experiments with Hyperflow, AWS Lambda, and Google Cloud Functions, Future Generation Computer Systems, Science Direct, vol. 110, no. 8, pp. 502-514, (2020), https://doi.org/10.1016/j.future.2017.10.029

12. L. F. Herrera-Quintero, J. C. Vega-Alfonso, K. B. A. Banse and E. Carrillo Zambrano, Smart ITS Sensor for the Transportation Planning Based on IoT Approaches Using Serverless and Microservices Architecture, IEEE Intelligent Transportation Systems Magazine, vol. 10, no. 2, pp. 17-27 (2018), https://doi.org/10.1109/MITS.2018.2806620.

13. S.V. Gogouvitis, H. Mueller, S. Premnadh, A. Seitz, B. Bruegge, Seamless computing in industrial systems using container orchestration, Future Generation Computer Systems (2018), https://doi.org/10.1016/j.future.2018.07.033

14. J.Y. Hwang, L. Nkenyereye, N.M. Sung, et al., IoT service slicing and task offloading for edge computing, IEEE Journal, 44, 4, (2020).

Author:

Dr. Arunkumar M. Sampath

Principal Consultant

Tata Consultancy Services

Dr. Arunkumar M. Sampath works as a Principal Consultant in Tata Consultancy Services (TCS) in Chennai. His interests include Hybrid and Electric Vehicles, Hydrogen & Fuel Cells, Connected and Autonomous Vehicles, Cybersecurity, Functional Safety, Advanced Air Mobility (AAM), AI, ML, Agile SW Development & DevOps, 5G, Edge Computing, Containers, and Data Analytics.

Published in Telematics Wire