Ford offers multi-seasonal self-driving data to the academic and research community

Every second a self-driving vehicle is operating, it’s gathering information about the world around it. Cameras and LiDAR help it identify vehicles, pedestrians, signs and anything else that might be out in or near the streets. Radar helps the vehicle keep track of how fast things are moving around it.

Without all this data, self-driving cars wouldn’t even be able to leave a parking lot. These vehicles need to process a constant stream of information to navigate their surroundings safely. Even before they can do that, engineers and researchers need high-quality data to create the software that teaches self-driving vehicles how to analyze their environments.

To further spur innovation in this exciting field, Ford is releasing a comprehensive self-driving vehicle data package to the academic and research community. There’s no better way of promoting research and development than ensuring the academic community has the data it needs to create effective self-driving vehicle algorithms.

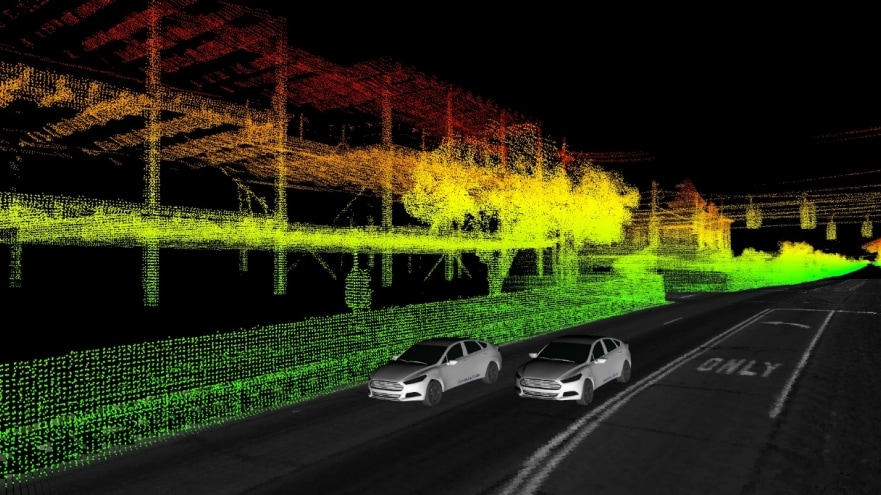

As part of this package, Ford is releasing data from multiple self-driving research vehicles collected over a span of one year, part of advanced research efforts separate from the work we’re doing with Argo AI to develop a production-ready self-driving system. The dataset includes LiDAR and camera sensor data along with GPS and trajectory information, as well as unique elements such as multi-vehicle data, 3D point cloud maps and ground reflectivity maps. The data is offered in the popular ROS format, and a plug-in is available to make it easy to visualize.

There are several reasons why this data is noteworthy to researchers.

Since this dataset spans an entire year, it includes seasonal variations and varied environments throughout Metro Detroit. It features data from sunny, cloudy and snowy days, not to mention freeways, tunnels, residential complexes and neighborhoods, airports and dense urban areas. Toss in construction zones and pedestrian activity, and researchers now have access to diverse scenarios that self-driving vehicles will find themselves in, helping them design more robust algorithms that can account for dynamic environments.

Most datasets only offer data from a single vehicle, but sensor information from two vehicles can help researchers explore entirely new scenarios, especially when the two encounter each other at different points along their respective routes. On its own, a single vehicle has limited “vision” in terms of what it can see; you’ll note in our visualizations that some parts are not colored in, which is because the vehicle’s sensors could not reach those areas. But with multiple vehicles in the same general area, it’s feasible that one would detect things the others simply cannot, potentially opening up new avenues for multi-vehicle communication, localization, perception and path planning.
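To make the idea of combined coverage concrete, here is a minimal sketch in Python. It is illustrative only and is not part of the released package: it assumes each vehicle’s LiDAR returns have been reduced to 2D points in that vehicle’s own frame, that each vehicle’s pose (x, y, yaw) in a shared map frame is known, and that a coarse grid is a good enough stand-in for “what was seen.” The function names, sample points and cell size are all hypothetical.

```python
import math

def to_map_frame(points, pose):
    """Rotate and translate 2D points from a vehicle frame into the shared map frame.

    pose is (x, y, yaw) of the vehicle in the map frame; yaw is in radians.
    """
    x0, y0, yaw = pose
    c, s = math.cos(yaw), math.sin(yaw)
    return [(x0 + c * px - s * py, y0 + s * px + c * py) for px, py in points]

def observed_cells(points_map, cell_size=1.0):
    """Return the set of grid cells that contain at least one point."""
    return {(math.floor(x / cell_size), math.floor(y / cell_size))
            for x, y in points_map}

# Hypothetical example: two vehicles facing each other on the same road.
cells_a = observed_cells(to_map_frame([(5.5, 0.5), (10.5, 0.5)], (0.0, 0.0, 0.0)))
cells_b = observed_cells(to_map_frame([(5.5, -0.5), (9.5, -0.5)], (20.0, 0.0, math.pi)))

merged = cells_a | cells_b      # everything either vehicle observed
only_b = cells_b - cells_a      # cells the first vehicle could not see
print(len(merged), sorted(only_b))
```

In this toy case the second vehicle contributes one cell the first never observed, which is the kind of gap-filling that multi-vehicle data makes possible to study.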

To aid this endeavor, this set includes high-resolution time-stamped data from our vehicles’ four LiDAR sensors and seven cameras that can help researchers tackle perception problems, that is, improving the ability of a self-driving vehicle to recognize and identify people, places and things in its environment. Precise localization and ground truth data allow researchers to see exactly how accurate their algorithms are, giving them a baseline for performance they can measure against in their own research.
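As a rough illustration of measuring an algorithm against ground truth, the sketch below computes the root-mean-square position error of an estimated trajectory. It is a simplified example under stated assumptions, not the evaluation method used by Ford: both trajectories are assumed to be time-sorted lists of (timestamp, x, y) tuples in the same frame, each estimate is matched to the nearest ground truth sample in time, and the 0.05 second matching tolerance is an arbitrary choice.

```python
import math

def position_rmse(estimated, ground_truth, max_dt=0.05):
    """RMSE of 2D position error between time-matched trajectory samples.

    estimated, ground_truth: lists of (t, x, y), each sorted by time t.
    max_dt: largest allowed time gap (seconds) for a match (assumed value).
    """
    if not ground_truth:
        raise ValueError("ground_truth is empty")
    squared_errors = []
    j = 0
    for t, x, y in estimated:
        # Advance to the ground truth sample closest in time to this estimate.
        while (j + 1 < len(ground_truth)
               and abs(ground_truth[j + 1][0] - t) <= abs(ground_truth[j][0] - t)):
            j += 1
        gt_t, gt_x, gt_y = ground_truth[j]
        if abs(gt_t - t) > max_dt:
            continue  # no ground truth sample close enough in time
        squared_errors.append((x - gt_x) ** 2 + (y - gt_y) ** 2)
    if not squared_errors:
        raise ValueError("no samples matched within max_dt")
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Hypothetical data: three ground truth poses and three slightly-off estimates.
truth = [(0.0, 0.0, 0.0), (1.0, 1.0, 0.0), (2.0, 2.0, 0.0)]
estimate = [(0.01, 0.0, 0.3), (1.0, 1.4, 0.0), (2.02, 2.0, 0.0)]
print(round(position_rmse(estimate, truth), 3))
```

A single number like this hides where the errors occur, so in practice researchers often also look at the error over time or per environment (tunnels versus open freeway, for example).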

In addition to releasing 3D point cloud maps from our LiDAR, we are also giving the research community access to high-resolution 3D ground plane reflectivity maps. Together, these maps give researchers a comprehensive understanding of what our self-driving vehicles “see” in the world around them.

The whole point of this effort is not only to improve the way self-driving vehicles navigate their environment and interact with personal cars, pedestrians and other self-driving vehicles, but also to support the next generation of engineers. Offering researchers a comprehensive package of information will enable them to create advanced simulations based on real data, and we are excited to see how this will all be used.