Building a Scalable ADAS Solution for an Emerging Market

As vehicle manufacturers look to implement advanced driver-assistance systems (ADAS) to save lives and meet government regulations, they want to use cost-effective yet reliable technologies that create a foundation for today’s ADAS capabilities and scale to support more sophisticated capabilities in the future.

ADAS has been proven to reduce the number of vehicle crashes. One of the fastest-growing ADAS features is automatic emergency braking (AEB), in which the vehicle detects that it is closing on an object too quickly and applies the brakes if the driver does not. The U.S.-based Insurance Institute for Highway Safety (IIHS) reports that AEB can reduce front-to-rear crashes by 50 percent.
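A common way to decide that a vehicle is "closing on an object too quickly" is time-to-collision (TTC): the current gap divided by the closing speed. The sketch below is a minimal illustration, not any vendor's AEB logic. It assumes a radar track that reports range and range rate, a flag saying whether the driver is already braking, and a fixed 1.5 s threshold. The class name, function names and threshold are all hypothetical. Production systems typically layer warnings, staged braking and vehicle-dynamics checks on top of a rule like this.

```python
from dataclasses import dataclass


@dataclass
class RadarTrack:
    """Hypothetical track record for the object ahead."""
    range_m: float         # distance to the object, meters
    range_rate_mps: float  # rate of change of range; negative means closing


def time_to_collision(track: RadarTrack) -> float:
    """Seconds until contact at the current closing speed (inf if not closing)."""
    if track.range_rate_mps >= 0.0:
        return float("inf")
    return track.range_m / -track.range_rate_mps


def aeb_should_brake(track: RadarTrack, driver_braking: bool,
                     ttc_threshold_s: float = 1.5) -> bool:
    """Brake automatically only if the driver has not, and TTC is below threshold."""
    if driver_braking:
        return False
    return time_to_collision(track) < ttc_threshold_s


if __name__ == "__main__":
    ahead = RadarTrack(range_m=20.0, range_rate_mps=-15.0)  # closing at 15 m/s
    print(round(time_to_collision(ahead), 2))              # 1.33
    print(aeb_should_brake(ahead, driver_braking=False))   # True
```

The driver-braking check reflects the "if the driver does not" condition in the text: the system intervenes only when the driver has not reacted.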

Emerging markets such as India are also moving toward deploying ADAS technology in vehicles. Automakers in India asked the central government to open up a spectrum band that would let them offer radar-based solutions to enhance passenger and pedestrian safety. The request paid off: the government has approved the use of very low power radio frequency devices, such as short-range radar systems, and freed up the 77 GHz band for use by the auto industry. The move stands to benefit the entire industry and, even more, consumers in India.

With AEB’s effectiveness demonstrated around the globe, the government of India is also helping to ensure that new vehicles ship with the technology by 2023. Major OEMs in India are already getting ready to move beyond AEB, with plans to include Level 2 and Level 2+ ADAS functionality in their vehicles in 2021.

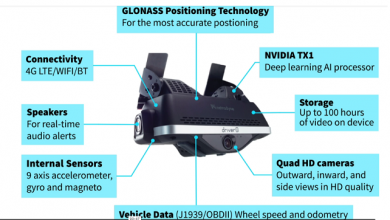

To enable AEB and other ADAS technologies, radars provide reliable, foundational sensing capabilities. They can tell not only how far away an object is – up to 500 meters with long-range automotive radar – but also, in the same measurement, how quickly that object is moving toward or away from the vehicle.
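Many automotive radars use a frequency-modulated continuous-wave (FMCW) waveform, which is one way a single measurement can yield both range and speed: the beat frequency between the transmitted and received chirp encodes distance, and the Doppler shift encodes radial velocity. The sketch below assumes an ideal FMCW radar with a 77 GHz carrier and ignores range-Doppler coupling, noise and angle estimation. The function names and the chirp slope used in the example are hypothetical.

```python
C = 299_792_458.0  # speed of light, m/s


def range_from_beat(beat_hz: float, chirp_slope_hz_per_s: float) -> float:
    """FMCW range: R = c * f_beat / (2 * S), with S the chirp slope in Hz/s."""
    return C * beat_hz / (2.0 * chirp_slope_hz_per_s)


def radial_velocity_from_doppler(doppler_hz: float, carrier_hz: float = 77e9) -> float:
    """Radial speed: v = wavelength * f_doppler / 2 (positive = approaching)."""
    wavelength_m = C / carrier_hz  # about 3.9 mm at 77 GHz
    return wavelength_m * doppler_hz / 2.0


if __name__ == "__main__":
    slope = 30e6 / 1e-6                  # hypothetical chirp: 30 MHz per microsecond
    beat = 2.0 * 100.0 * slope / C       # beat frequency a 100 m target would produce
    print(round(range_from_beat(beat, slope), 3))            # 100.0
    doppler = 2.0 * 20.0 / (C / 77e9)    # Doppler shift of a target closing at 20 m/s
    print(round(radial_velocity_from_doppler(doppler), 3))   # 20.0
```

Both quantities come from frequency measurements on the same received signal, which is why radar does not need to difference positions across frames to estimate speed.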

Radars use radio waves to detect objects, which means they work well in a variety of weather conditions, including rain, snow, fog and smoke. Unlike cameras, radars do not need a clean, unobstructed optical view, so dirt and grime have little effect on them. In fact, a radar can be hidden out of sight behind a panel or the grille and still reliably deliver accurate information about the environment around the vehicle.

Cameras are also cost-effective, but they must estimate an object's distance from its apparent size in the image, their range is limited by the camera's resolution, and they must infer speed by comparing multiple image frames.
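The size-based distance estimate can be sketched with an ideal pinhole camera model, Z = f·H/h, where f is the focal length in pixels, H the object's real height and h its height in the image. This minimal sketch assumes the real height is known (for example, inferred from the object's class), no lens distortion, and hypothetical values for focal length and object height. It also shows why resolution limits range: a far object spans few pixels, so a one-pixel error moves the estimate a lot.

```python
def distance_from_size(focal_px: float, real_height_m: float, height_px: float) -> float:
    """Pinhole-camera range estimate: Z = f * H / h."""
    return focal_px * real_height_m / height_px


def closing_speed(z_prev_m: float, z_curr_m: float, dt_s: float) -> float:
    """Speed from two frames' distance estimates (positive = approaching)."""
    return (z_prev_m - z_curr_m) / dt_s


if __name__ == "__main__":
    f_px, car_height_m = 1000.0, 1.5  # hypothetical focal length and assumed object height
    z1 = distance_from_size(f_px, car_height_m, 50.0)  # 30.0 m
    z2 = distance_from_size(f_px, car_height_m, 52.0)  # about 28.85 m, 0.1 s later
    print(round(closing_speed(z1, z2, 0.1), 1))         # 11.5
    # Resolution limit: at about 10 px tall, one pixel shifts the estimate by ~17 m.
    print(round(distance_from_size(f_px, car_height_m, 9.0)
                - distance_from_size(f_px, car_height_m, 10.0), 1))  # 16.7
```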

However, cameras are excellent at classifying objects, for example distinguishing a pedestrian from a bicyclist, and they can even read street signs. Because each type of road user behaves differently, a system that knows what it is looking at can better anticipate how those objects will move.

Lidar can measure distances accurately, and its high resolution allows it to determine the edges of objects precisely. However, lidar is very expensive, and, like cameras, it requires a clean and clear surface in front of it to be effective.
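Many lidars measure distance by timing the round trip of a laser pulse (pulsed time-of-flight; some designs use other schemes such as FMCW). The minimal sketch below shows the relation d = c·t/2 and why timing precision matters: each nanosecond of timing error corresponds to about 15 cm of range. The function name is hypothetical.

```python
C = 299_792_458.0  # speed of light, m/s


def lidar_range(round_trip_s: float) -> float:
    """Pulsed time-of-flight range: the pulse travels out and back, so d = c * t / 2."""
    return C * round_trip_s / 2.0


if __name__ == "__main__":
    print(round(lidar_range(1e-6), 2))  # 149.9 (meters, for a 1 microsecond round trip)
    print(round(lidar_range(1e-9), 3))  # 0.15 (meters of range per nanosecond of timing)
```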

Sensor fusion

The main sensors in use on vehicles today are radar and cameras, with ultrasonic sensors covering short distances at low speeds and lidar used in autonomous driving.

The key, then, is to take the best attributes of different sensing and perception technologies and combine them. As vehicle manufacturers look beyond the simplest ADAS functions to Level 2 and Level 3 functionality, this sensor fusion will be critical to building a robust environmental model.
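One of the simplest building blocks of sensor fusion is weighting each sensor's estimate by how much it can be trusted. The sketch below uses inverse-variance weighting of two independent estimates, which is the scalar core of a Kalman filter update. The variances are hypothetical, chosen to reflect the text's point that radar measures range well while camera range is coarser. Real systems fuse multidimensional states over time, and the camera's main contribution is often the object class rather than range.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    value: float     # e.g. range to an object, meters
    variance: float  # uncertainty of that value, m^2


def fuse(a: Estimate, b: Estimate) -> Estimate:
    """Inverse-variance weighted fusion of two independent Gaussian estimates.

    The more certain sensor gets the larger weight, and the fused variance is
    smaller than either input's.
    """
    w_a, w_b = 1.0 / a.variance, 1.0 / b.variance
    value = (w_a * a.value + w_b * b.value) / (w_a + w_b)
    return Estimate(value=value, variance=1.0 / (w_a + w_b))


if __name__ == "__main__":
    radar = Estimate(value=40.0, variance=0.25)  # hypothetical: radar range is precise
    camera = Estimate(value=42.0, variance=4.0)  # hypothetical: camera range is coarse
    fused = fuse(radar, camera)
    print(round(fused.value, 2), round(fused.variance, 3))  # 40.12 0.235
```

The fused range stays close to the radar value, yet its variance is lower than the radar's alone, which is the practical payoff of combining modalities.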

In the past, manufacturers packaged processors with the sensors themselves. The processors would analyze the data coming from the sensors and track any objects they had identified. Now, many OEMs are looking at removing the processing power from all of those sensors and consolidating it into a centralized domain controller.

This approach makes the sensors smaller and lighter. In this satellite architecture, radars, for example, are up to 70 percent smaller than their smart-sensor counterparts with built-in processors. Power supplies, housings and brackets are reduced or eliminated, cutting the overall mass of the ADAS by 30 percent. OEMs gain more flexibility in where they place the sensors, and heat management becomes less of an issue.

The centralization also helps enable low-level sensor fusion. Instead of each sensor processing its data individually and forwarding the results, the data from multiple sensors and sensor modalities is combined in a single step. This reduces latency and allows the ADAS to make decisions faster.
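The article does not spell out how the domain controller combines data in a single step. As one hedged illustration, the sketch below takes raw detections from several satellite radars, transforms each into a common vehicle coordinate frame using the sensor's mounting pose, and groups the pooled points once, instead of having each sensor build its own object list. The sensor names, mounting poses and 1 m clustering radius are hypothetical, and the greedy clustering stands in for the more robust clustering and multi-object tracking a production system would use.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class MountPose:
    """Hypothetical sensor mounting pose in the vehicle frame."""
    x_m: float      # forward offset from the vehicle origin
    y_m: float      # left offset from the vehicle origin
    yaw_rad: float  # boresight angle relative to the vehicle's forward axis


@dataclass(frozen=True)
class Detection:
    range_m: float
    azimuth_rad: float  # angle in the sensor's own frame


def to_vehicle_frame(det: Detection, pose: MountPose) -> tuple[float, float]:
    # Polar -> Cartesian in the sensor frame.
    xs = det.range_m * math.cos(det.azimuth_rad)
    ys = det.range_m * math.sin(det.azimuth_rad)
    # Rotate by the mounting yaw, then translate by the mounting position.
    c, s = math.cos(pose.yaw_rad), math.sin(pose.yaw_rad)
    return (pose.x_m + c * xs - s * ys, pose.y_m + s * xs + c * ys)


def pool_detections(frames: dict[str, list[Detection]],
                    poses: dict[str, MountPose]) -> list[tuple[float, float]]:
    """Put every sensor's raw detections into one vehicle-frame point list."""
    points: list[tuple[float, float]] = []
    for sensor_id, detections in frames.items():
        pose = poses[sensor_id]
        points.extend(to_vehicle_frame(d, pose) for d in detections)
    return points


def cluster(points: list[tuple[float, float]],
            radius_m: float = 1.0) -> list[list[tuple[float, float]]]:
    """Greedy single pass: a point joins the first cluster whose seed is within radius_m."""
    clusters: list[list[tuple[float, float]]] = []
    for p in points:
        for members in clusters:
            if math.dist(p, members[0]) <= radius_m:
                members.append(p)
                break
        else:
            clusters.append([p])
    return clusters


if __name__ == "__main__":
    poses = {"front_left": MountPose(3.7, 0.5, 0.0),
             "front_right": MountPose(3.7, -0.5, 0.0)}
    # Both radars see the same object, 10 m ahead of the bumper on the centerline.
    frames = {"front_left": [Detection(math.hypot(10.0, 0.5), math.atan2(-0.5, 10.0))],
              "front_right": [Detection(math.hypot(10.0, 0.5), math.atan2(0.5, 10.0))]}
    print(len(cluster(pool_detections(frames, poses))))  # 1
```

Because grouping happens once over the pooled points, an object seen by two overlapping radars yields one cluster rather than two separately tracked objects that must be reconciled later.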

With the right data, the centralized domain controller can also apply machine learning to extract more from it. For example, machine learning helps a system better identify and classify objects from radar data, such as pedestrians in a cluttered environment. It also helps the system identify vulnerable road users such as bicyclists and motorcyclists, reducing misses by 70 percent compared with classical radar signal processing. Combining radar data with data from other types of sensors through sensor fusion makes further improvements possible.
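The article does not describe the machine-learning model behind these results, and the sketch below does not reproduce them. As a deliberately simple stand-in for the far larger learned models a production system would use, it trains a nearest-centroid classifier on two hypothetical per-object radar features: radar cross-section (dBsm) and Doppler spread (m/s), the idea being that swinging limbs or pedaling legs spread the Doppler return. All feature values, labels and function names are hypothetical, and a real system would also normalize features measured in different units.

```python
import math
from collections import defaultdict

# Hypothetical radar features per object: (radar cross-section in dBsm, Doppler spread in m/s).
Features = tuple[float, float]


def train_centroids(samples: list[Features], labels: list[str]) -> dict[str, Features]:
    """'Learn' one mean feature vector (centroid) per class from labeled samples."""
    sums: dict[str, list[float]] = defaultdict(lambda: [0.0, 0.0, 0.0])
    for (rcs, spread), label in zip(samples, labels):
        acc = sums[label]
        acc[0] += rcs
        acc[1] += spread
        acc[2] += 1
    return {label: (acc[0] / acc[2], acc[1] / acc[2]) for label, acc in sums.items()}


def classify(x: Features, centroids: dict[str, Features]) -> str:
    """Assign the class whose centroid is nearest in feature space."""
    return min(centroids, key=lambda label: math.dist(x, centroids[label]))


if __name__ == "__main__":
    samples = [(-9.0, 1.4), (-7.0, 1.6),   # pedestrians
               (-4.0, 2.8), (-2.0, 3.2),   # bicyclists
               (9.0, 0.2), (11.0, 0.4)]    # cars
    labels = ["pedestrian"] * 2 + ["bicyclist"] * 2 + ["car"] * 2
    centroids = train_centroids(samples, labels)
    print(classify((-7.5, 1.3), centroids))  # pedestrian
    print(classify((-2.5, 2.9), centroids))  # bicyclist
```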

The result is a robust environmental model that becomes the basis for higher levels of automation, giving the system the information it needs to make intelligent decisions. This level of reliability is necessary for advanced features like highway assistance and traffic jam assistance.

Each innovation builds on the last. OEMs can start with a smart radar to meet regulatory requirements for AEB today while planning for a satellite architecture that leads to centralized intelligence for more advanced functions. This approach lets them add new sensors easily and introduce new, differentiating ADAS functions at their own pace. In this way, OEMs can democratize safety while moving to advanced safety, comfort, convenience and automated driving capabilities as consumers demand them.

The Indian ADAS market may seem to be in its early stages, with only a small percentage of vehicles fitted with Level 1 or Level 2 driver-assistance features, but in fact it is responding rapidly to the need for safer driving conditions.

As an emerging economy, India is on the right path to accelerate widescale adoption of ADAS. Next, it must build up IT infrastructure on its highways and implement stricter road safety regulations. Meanwhile, technology development and innovation continue at a rapid pace within the auto industry, giving companies the opportunity to gain a first-mover advantage in this emerging market.

Author:

Santhosh Brahmappa is site director at Aptiv Technical Center India, which develops multiple products and technologies enabling the future of mobility. He is a seasoned business leader with global engineering responsibility, driving transformation through software enablement, building talent for automotive software and driving technology practices across the globe. He has diverse industry experience across verticals, including automotive, software products, industrial automation and medical devices.

Published in Telematics Wire