Decoding Sensors in AD/ADAS

Growth in AD and ADAS Vehicles

The automotive industry's total economic impact is reported to be about 3% of global GDP; in emerging markets such as India and China it is around 7%. For a long time, cars and other vehicles were about mobility, the thrill of the ride, status, and style. In the past decade and a half, the industry's imperative has changed drastically. It has become a hotbed of innovation and of the creation of exciting products and solutions. Sectors that were not part of the automotive ecosystem, such as electronics and software, are now integral to it.

One of the key features driving innovation is Autonomous Driving (AD), understood as the situation where a car drives itself fully, in any conditions, anywhere. While that is not common yet, several automation features already assist drivers; these are known as Advanced Driver Assistance Systems (ADAS).

The AD/ADAS market is expected to grow at a CAGR of approx. 70% to reach 6.9 million units by 2030. So far, the major use case for Autonomous Driving has been individual passenger cars; however, several other applications are envisioned for the technology, such as:

- Self-owned Passenger cars

- Trucking

- Taxi, Buses, Delivery Services

- Autonomous Flying

- Farming and Agriculture

- Construction and Mining

- Logistics

- Platooning

Today's AD/ADAS vehicles provide far more safety than human driving. There is an ongoing debate on whether automation should be kept within the limits of human perception or extended beyond them. Several luxury car OEMs already provide features beyond human perception, such as the rear parking view. The major benefits of AD/ADAS technology across the industry are:

- Safety. It is predicted that by 2050, when fully automated driving is expected to be the preferred driving mode, accidental deaths will drop by 90%.

- Mobility for disabled and elderly persons.

- Better lane capacity utilization.

- Better fuel economy.

- Reduction in emissions. Owing to better driving behavior and better lane capacity utilization, emissions are estimated to drop by 60%.

- Accuracy and productivity benefits in industries like mining, agriculture, etc.

Levels of Automation defined for Automated Driving

The globally accepted levels of automation are defined by SAE and are used by OEMs, suppliers, and governments to share a common understanding in advertising, design, policy making, etc.

Level of Driving Automation described by SAE J3016

Sensors in AD/ADAS technology

From a sensor point of view, AD/ADAS technology is enabled by perception and reaction sensors. Reaction sensors, such as pressure sensors, have gained the most in usage over the years, and their market is expected to grow more than any other. These sit on the actuation side and enable action based on instructions from the car's computing unit.

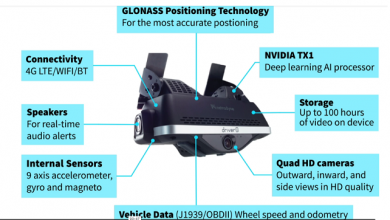

The AD/ADAS perception layer needs inputs about the environment around the car, and obtaining them has been the biggest challenge in the development of autonomous driving. Perception sensors provide environmental information to the perception unit, which combines it and sends it to the vehicle control system. While a human driver uses only eyes and ears as the major perception sensors, the brain can process that information in ways that a camera and microphone alone cannot in an autonomous vehicle. This is where AI within drive processing comes into play.

Different types of sensors are used in ADAS, chosen for their strengths and weaknesses in providing input to the vehicle's perception layer under various conditions: vehicle speed, communication speed limits, reliability, the ability to capture different parameters, and distance.

The sensors used, based on the functions required in AD/ADAS vehicles, are:

- Camera

- Long Range Radar

- LIDAR

- Short and Medium Range Radar/Sonars

- Other

  - GNSS

  - IMU

Over the years, sensors have advanced in the speed, reliability, and accuracy with which they gather information. Some OEMs focus on one type of sensor more than others. Sensor fusion, i.e. combining the data from all sensors and then computing the controls, is also continuously evolving. Artificial intelligence has played a huge role in identifying road conditions and interpreting them accurately. The distance of objects from the vehicle plays the primary role in choosing the sensor type.

Tesla famously tends to depend more on cameras, relying on its AI software to perceive road conditions accurately, supported by other sensors. Its argument has been that autonomous vehicles need only as much sensing as humans can perceive; after all, that is all we rely on in a human-driven car today. On the other hand, if there is an opportunity to improve safety and gain other benefits, then over time the higher cost of other sensors may not hurt the individual owner or the industry overall.

Radars are another set of sensors used extensively. Which ones can be used depends on distance, speed, the overall interpretation architecture, and the goals of the autonomous driving system.

Data collected by sensors is also used to train machine learning models and to develop better interpretation and handling in the AD/ADAS system.
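The article does not say which fusion method any OEM uses. As a minimal sketch of the idea only, assuming each sensor reports a distance to the same object together with a known measurement variance, inverse-variance weighting combines the readings so that the more precise sensors count for more. The sensor labels, distances, and variances below are illustrative assumptions, not measured values.

```python
from dataclasses import dataclass


@dataclass
class RangeReading:
    sensor: str        # hypothetical label, e.g. "radar"
    distance_m: float  # estimated distance to the object, in meters
    variance: float    # assumed measurement variance, in m^2


def fuse_ranges(readings):
    """Inverse-variance weighted mean of independent range estimates."""
    if not readings:
        raise ValueError("need at least one reading")
    # A smaller variance means a more trusted sensor, hence a larger weight.
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    fused_variance = 1.0 / total  # never larger than the best single sensor
    return fused, fused_variance


# Illustrative numbers only (assumptions, not real sensor specifications).
readings = [
    RangeReading("camera", 42.0, 4.0),
    RangeReading("radar", 40.0, 1.0),
    RangeReading("lidar", 40.5, 0.25),
]
distance, variance = fuse_ranges(readings)
print(f"fused distance: {distance:.2f} m, variance: {variance:.3f} m^2")
```

In this sketch the fused variance is smaller than that of any single sensor, which is one simple way to see why combining sensor types can be worth their added cost.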

Ultrasound

Short-range sensing can be done with sonar/ultrasound sensors, which are accurate, have a small form factor, and are relatively cheap.

Radar

For medium range, AD/ADAS vehicles use radar, because sonar cannot be used for navigation owing to its short range. Radar, however, can be used widely for navigation and is largely unaffected by bad weather, fog, etc.

Camera

Cameras are the sensor most naturally relied upon because of the parallel with the human eye. However, multiple cameras are positioned at different places on the vehicle, and their inputs are combined to give the AD/ADAS system an overall picture labeled with object identifications such as pedestrians, sign boards, and other cars.

Poor weather and low light conditions are obvious challenges for Cameras.

LIDAR

LIDAR provides the highest range and 3-dimensional object recognition and, with recent developments, velocity and surface reflectivity as well. It is more expensive, however, and some OEMs have concerns that rain, dust, etc. can cause interference. Nevertheless, LIDAR manufacturers and OEMs continue to form joint ventures and to develop it further as one of the most reliable sensors. A recent development is solid-state 5D technology, which provides 3-dimensional position, velocity, and surface reflectivity, and in turn enables highly accurate object identification at vehicle speeds of up to 500 km/h.

LIDAR is not impacted by darkness, sunlight, etc., because it relies on its own low-intensity laser. This also means that LIDAR requires more energy than other sensors. Two types of LIDAR sensors are used today: those that use a "Time of Flight" mechanism to identify 3D location and velocity, and FMCW (Frequency-Modulated Continuous Wave) sensors that use a solid-state array. Views differ on which to choose with respect to application, speed, accuracy, and the ability to measure velocity and detect lateral motion, but more advances are being observed in solid-state LIDARs, improving the likelihood of lower cost and a smaller form factor.
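The two measurement principles named above can be sketched with their textbook relations: a time-of-flight sensor converts the round-trip time of a laser pulse into distance (d = c·t/2), and a continuous-wave sensor can read radial velocity from the Doppler shift of the returned light (v = f_d·λ/2). The function names, the 1 µs round trip, the 1550 nm wavelength, and the 12.9 MHz shift are assumptions chosen for illustration and do not describe any specific product.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # speed of light in vacuum


def tof_distance_m(round_trip_s):
    """Distance from a pulse's round-trip time: the light travels out and back."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def doppler_radial_velocity_m_s(doppler_shift_hz, wavelength_m):
    """Radial velocity of a target from the Doppler shift of reflected light."""
    return doppler_shift_hz * wavelength_m / 2.0


# Hypothetical inputs, for illustration only.
distance = tof_distance_m(1e-6)                          # 1 microsecond round trip
velocity = doppler_radial_velocity_m_s(12.9e6, 1550e-9)  # assumed 1550 nm laser

print(f"time-of-flight distance: {distance:.1f} m")
print(f"Doppler radial velocity: {velocity:.2f} m/s")
```

A round trip of one microsecond corresponds to roughly 150 m, which shows why time-of-flight sensing at driving distances needs timing resolution on the order of nanoseconds.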

Path of evolution for sensors

LIDAR is used by more OEMs today for AD/ADAS because of its accuracy, speed, and detail. It is, however, more expensive and larger, despite recent reductions in size. Compute devices and platforms also need to be modified every time there is a major advance in sensor capability. NVIDIA Drive PX2, NVIDIA Drive AGX (SoC, System on a Chip), Texas Instruments TDA3x, Mobileye EyeQ5, and Google TPU v3 are some of the major computing platforms that utilize the sensor data.

Some factors are important to consider while predicting the future of sensors and their computing systems in AD/ADAS.

Cost

AD/ADAS vehicles carry an additional cost for both the consumer and the OEM. In the post-COVID-19 era, a supply chain struggling to get back on its feet, record-high inflation perilously reducing margins, and pressure on sourcing from growing geopolitical tensions are, among other factors, impediments to innovative technology. For sensors specifically, the electronics industry has been joining hands with automotive companies, and automotive ancillaries are still betting big on continuous innovation and scaled production to reduce the cost to the car.

Cybersecurity & privacy

The amount of data generated by sensors can reach 4,000 GB per day, according to Intel's reports. Controlling vehicles through data processing and computing carries an inherent risk of cyberattacks. The impact can range from loss of data privacy to a serious takeover of control in uses such as platooning.
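To put the 4,000 GB per day figure in perspective, the short calculation below converts it into an average sustained data rate. It is a back-of-the-envelope sketch: it assumes decimal gigabytes and treats the hours of operation as a free parameter, since the text does not say over how many driving hours the data is generated. The function name is hypothetical.

```python
GB = 10**9  # decimal gigabyte; an assumption, the source does not specify


def average_rate(gb_per_day, hours_of_operation=24.0):
    """Return the average data rate as (megabytes/s, megabits/s)."""
    seconds = hours_of_operation * 3600.0
    bytes_per_s = gb_per_day * GB / seconds
    return bytes_per_s / 1e6, bytes_per_s * 8 / 1e6


# Figure from the text, spread over a full day.
mb_s_full_day, mbit_s_full_day = average_rate(4000)

# Same volume compressed into a hypothetical 8 hours of driving.
mb_s_8h, mbit_s_8h = average_rate(4000, hours_of_operation=8.0)

print(f"24 h: {mb_s_full_day:.1f} MB/s ({mbit_s_full_day:.0f} Mbit/s)")
print(f" 8 h: {mb_s_8h:.1f} MB/s ({mbit_s_8h:.0f} Mbit/s)")
```

Even averaged over a full day this is about 46 MB/s of continuous data, all of which has to be stored, transmitted, or discarded securely.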

Protecting investment from new disruptive tech

The high additional cost and the need for accuracy and efficiency at the current early levels of vehicle automation are driving continuous innovation and change in the sensor and sensor fusion industry. Every six months a new generation or new products are announced, along with new JVs, mergers, and acquisitions. For automotive OEMs, keeping up with the technology during product lifecycles is going to be a constant challenge for a few more years.

Energy consumption

Sensors cause both direct and indirect energy consumption. In the era of EVs and FCEVs (Fuel Cell Electric Vehicles), the economics of energy consumption become very important. To process 8K video, 5-7D LIDAR, GPS, and radar data in real time, a single SoC unit may need to deliver up to 320 trillion operations per second, and it can be optimized only to a power draw of up to 500 watts.
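The two figures quoted above can be turned into a compute-efficiency number and an energy budget with simple arithmetic. The sketch below uses the 320 trillion operations per second and 500 watts from the text; the two-hour trip is a hypothetical duration chosen only for illustration, and the function names are not from any library.

```python
def tops_per_watt(tera_ops_per_s, power_w):
    """Compute efficiency in trillions of operations per second per watt."""
    return tera_ops_per_s / power_w


def energy_kwh(power_w, hours):
    """Energy drawn at constant power over a given time, in kilowatt-hours."""
    return power_w * hours / 1000.0


efficiency = tops_per_watt(320.0, 500.0)  # figures quoted in the text
trip_energy = energy_kwh(500.0, 2.0)      # 2 h trip is a hypothetical example

print(f"efficiency: {efficiency:.2f} TOPS/W")
print(f"compute energy for a 2 h trip: {trip_energy:.1f} kWh")
```

Under these assumptions the compute unit alone draws about 1 kWh on a two-hour trip, energy that in an EV comes out of the same battery that provides driving range.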

Social and government posture

The Alliance for Automotive Innovation in the USA recently released a recommendation to the Department of Transportation on autonomous driving, recommending that changes to the law focus on vehicles on the assumption that there are no human drivers. Similarly, human drivers need to learn how to deal with cars that have no driver and, in some cases, no human passengers at all. Industries such as transportation, insurance, and emergency services will need to extend their thinking to handle autonomous cars on the road and to standardize data points for processing claims, providing service, etc.

Summary

Only a few decades ago, it was impossible to imagine that cars driving themselves was even a possibility. Today there are L4 cars on the road. ADAS has already changed the way consumers look at driving. It does face challenges from regulation, cost, electronics, and other dependent industries.

Despite all these challenges, there is no stopping the move of AD/ADAS to higher levels of automation, and innovation and product rollouts are continuously being observed in the market. The sensor market is flooded with startups and new-age companies, some of which have over time been acquired by big tech and auto companies. The global sensor market is likely to grow at around 7% CAGR between 2021 and 2028, which means that the economy and innovation around it will not relent any time soon!

Authors:

Saurabh Chaturvedi

Delivery Project Executive: Automotive Complex Program Manager: Hybrid Multicloud

IBM Consulting

Saurabh is IBM Certified Delivery Project Executive for Automotive clients in IBM Consulting – India. He has led several IT and Consulting engagements for Automotive clients for over 20 years. Through his expertise in Automotive Industry, he has led technology transformations to maximize client’s business value from their strategy implementation in Digital, Cognitive, and IoT.

Sankalp Sinha

Business Development Executive – Automotive Aerospace & Defense, CIC India

IBM Consulting

Sankalp is the Business Development Executive for the Automotive, Aerospace & Defense industry at IBM Consulting, CIC India. He also leads Global Auto, Aerospace & Defense Knowledge Management. A subject matter expert in Automotive with over 12 years of experience, Sankalp holds an MBA in Operations Management from SPJIMR, Mumbai, and an advanced degree in Integrated Supply Chain Management from the Eli Broad School of Business, Michigan State University, USA.

Published in Telematics Wire