Fueling IoT with Big Data

Cyber Security Ventures

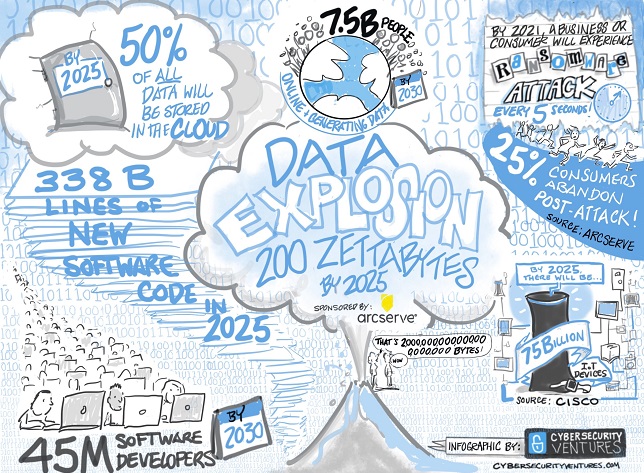

With 5G being deployed rapidly around the globe and a predicted 127 billion connected devices by 2030, businesses are investing heavily in IoT. To put this in perspective, the world population is expected to reach 8.5 billion in 2030, so 127 billion connected devices would mean around 15 devices for every person. The decade of IoT has just begun, and industry experts call IoT the next big industrial revolution, Industry 4.0, which is expected to be much bigger than any of the previous industrial revolutions.

Better predictability, cost savings and operational efficiency are a few of the many benefits offered by Big Data combined with IoT. This potent combination can be a game changer for many businesses in Industry 4.0, specifically in fields like logistics, health care and mobility, where a lot of effort and money is being spent on optimization and automation. Currently, 82% of industrial corporations are either using IoT, running a pilot, or planning to adopt it in the near future.

IoT acts as a feeder network to the ocean of Big Data. Once telemetry data is fed into the data lake and combined with other data that previously sat in silos, the value of the data rises sharply. Processed appropriately, this data gives businesses context and insights for better decision making, so that decisions are driven by data rather than by biases.

Only 15% of the data is utilized effectively today; the rest is just a heap of data lying around. Hence it is important not only to gather data, but also to collect it smartly and make the most of the data available. This shows how much potential remains in Big Data that can be explored using IoT.

IoT and Big Data strategies for your business

IoT strategy

With numerous IoT and data platforms out there, choosing the right platform and designing the IoT strategy can be overwhelming.

These are some of the questions that will give clarity when choosing the right platform for your business:

- What’s the problem that I want IoT to solve for my business?

- What are my device profiles, and what protocols would they speak?

- Would I need an edge layer, or can my devices communicate directly with the cloud?

- What is the scale: the number of devices and the data volume being collected?

The answers to these questions, together with the main priorities of your business, help you converge on the right IoT platform. If a platform completely satisfies your needs, that is a perfect fit; otherwise, tradeoffs must be made to select the best fit among the available choices.

Data strategy

Once the IoT strategy and platform are decided, the next decision is the data strategy: what to do with the data the platform collects.

Some of these questions would ease your decision-making process:

- What is the nature of the data – media (audio/video/images) or text?

- How quickly are data insights required – real time or batch?

- Data quality – what data is good data?

- How would the data be consumed – analytics, machine learning, monitoring/alerting?

- Who are the stakeholders consuming the data?

- How long must the data be retained?

With these questions answered, it will be easier to formulate the data strategy for your IoT system.

Finalizing these fundamental requirements is very important and might take weeks to months. The strategy must also be extensible to accommodate future expansion of your business.

Important aspects

Connectivity and QoS

With devices in the field speaking a variety of protocols such as MQTT, WebSockets, LoRaWAN, Zigbee and Bluetooth, connectivity forms the core of the IoT platform. Edge-local protocols are handled at the edge, while some devices connect directly to the cloud over IP-based protocols like MQTT and WebSockets.

Devices use a wide range of connectivity, from 2G to 5G, and portable devices are often in motion, so devices frequently communicate with the cloud over flaky networks. With frequent network glitches, maintaining Quality of Service (QoS) during message exchange between the cloud and the edge or device is very important. Some IoT protocols, like MQTT, offer QoS levels with different delivery guarantees, so that messages are delivered reliably even in flaky network conditions. MQTT defines 3 QoS levels, numbered 0 to 2:

- 0 (ATMOST_ONCE)

- 1 (ATLEAST_ONCE)

- 2 (EXACTLY_ONCE)
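The practical difference between the three levels is how the sender retries and whether the receiver de-duplicates. The following is a minimal simulation in plain Python, not a real MQTT client: `FlakyLink`, `deliver` and the drop patterns are hypothetical names invented for illustration, and QoS 2 is modeled only by its effect (de-duplication by message id) rather than by MQTT's actual multi-step handshake.

```python
from itertools import cycle


class FlakyLink:
    """Hypothetical lossy channel that drops transmissions in a fixed pattern."""

    def __init__(self, drop_pattern):
        # drop_pattern is a list of booleans; True means "this attempt is lost".
        self._drops = cycle(drop_pattern)

    def transmit(self):
        """Return True if this transmission got through."""
        return not next(self._drops)


def deliver(messages, qos, forward_drops, ack_drops, max_tries=10):
    """Simulate sending messages at a QoS level; return what the receiver sees."""
    data_link = FlakyLink(forward_drops)   # device -> cloud direction
    ack_link = FlakyLink(ack_drops)        # cloud -> device acknowledgements
    received = []
    seen_ids = set()
    for msg_id, payload in enumerate(messages):
        for _ in range(max_tries):
            arrived = data_link.transmit()
            if arrived:
                # QoS 2 hands each message id to the application only once.
                if qos < 2 or msg_id not in seen_ids:
                    received.append(payload)
                seen_ids.add(msg_id)
            if qos == 0:
                break  # at most once: fire and forget, never retry
            if arrived and ack_link.transmit():
                break  # sender saw the acknowledgement and stops retrying
    return received
```

With a link that loses every other packet in both directions, QoS 0 loses messages, QoS 1 delivers everything but with duplicates (a lost acknowledgement triggers a resend), and QoS 2 delivers each message once. Higher levels cost more round trips, so the level is usually chosen per message type rather than globally.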

Edge data analytics

IoT Edge systems help decentralize data processing from the cloud to on-premise devices. An edge device can be as small as a device with 500 KB of memory or as big as a desktop with 8 GB of memory. Edge data analytics eases the IoT ecosystem in several ways:

- Data local decisions: Faster response time on critical events, because important decisions are taken locally without depending on the cloud or the network. Edge devices play a crucial role in events like a car accident, where response time is mission critical.

- Local data aggregations: Reduce the raw data sent to the cloud, saving network bandwidth and cloud load. The aggregations can be dynamic: the cloud can push aggregation policies to the edge at runtime.

- Consistent uptime: Reduces dependency on the network and the cloud, and avoids system downtime when either of them is affected.

- Data security: Local decision making avoids sending sensitive data to the cloud. Edge devices can also perform data masking, that is, masking PII before the data is sent to the cloud.

- Off-grid operation: Edge processing is crucial for off-the-grid IoT systems such as oil fields and remote installations.

Since edge systems have limited computing power, edge data analytics and processing must be used judiciously.
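Two of the edge tasks listed above, local aggregation and PII masking, are small enough to sketch. The example below is an illustration under assumptions: the record layout (`value`, `driver_name`) and the function names are hypothetical, and masking is done with a truncated SHA-256 digest, which is only one of several possible masking schemes.

```python
import hashlib
from statistics import mean


def aggregate_window(readings):
    """Collapse the raw readings of one time window into a single summary record."""
    values = [r["value"] for r in readings]
    return {
        "count": len(values),
        "min": min(values),
        "max": max(values),
        "mean": mean(values),
    }


def mask_pii(record, pii_fields):
    """Return a copy of the record with PII fields replaced by a short digest."""
    masked = dict(record)  # copy so the original stays untouched on the device
    for field in pii_fields:
        if field in masked:
            raw = str(masked[field]).encode("utf-8")
            masked[field] = hashlib.sha256(raw).hexdigest()[:12]
    return masked
```

Sending one summary per window instead of every raw reading is what saves bandwidth. Note that an unsalted hash of a low-entropy value can be guessed by brute force, so a real deployment would likely add a secret salt or use tokenization.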

Data analytics stages

Once the data is in the cloud, it needs to go through different stages:

- Data validation and filtering – Invalid data is filtered out so that corrupted data does not enter the system, and only the chosen data streams are passed on to the next stages.

- Data enrichment or preprocessing – The raw filtered data is enriched with additional information, for example by replacing zip codes with richer location information or adding complete user information based on user IDs. This process is also called data flattening: all the data needed for analytics is written into the record, avoiding joins in the later stages.

- Data processing – All the business logic is applied in this stage, which is generally the most complex one. Depending on how close to real time the results are needed, data processing can be categorized into real-time processing, batch processing and Lambda processing.
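The first two stages can be sketched as a small pipeline. This is a minimal illustration, assuming dictionary records; the lookup tables, field names and the plausibility range are hypothetical stand-ins for what would normally come from a database or cache.

```python
# Hypothetical reference data used for enrichment.
ZIP_TO_LOCATION = {"560001": {"city": "Bengaluru", "country": "IN"}}
USERS = {"u42": {"user_name": "Asha", "plan": "fleet"}}
ALLOWED_STREAMS = {"temperature"}  # the data streams chosen for processing


def is_valid(record):
    """Reject records with missing fields or physically implausible values."""
    value = record.get("value")
    return (
        isinstance(record.get("device_id"), str)
        and isinstance(value, (int, float))
        and -50.0 <= value <= 150.0
    )


def enrich(record):
    """Flatten lookups into the record so later stages need no joins."""
    flat = dict(record)
    flat.update(ZIP_TO_LOCATION.get(record.get("zip"), {}))
    flat.update(USERS.get(record.get("user_id"), {}))
    return flat


def preprocess(records):
    """Validate, keep only the chosen streams, then enrich what remains."""
    return [
        enrich(r)
        for r in records
        if is_valid(r) and r.get("stream") in ALLOWED_STREAMS
    ]
```

After `preprocess`, each surviving record carries its own location and user details, so the processing stage can work on one record at a time.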

Data processing

Real-time processing is for time-critical responses and reports. Real-time alerting when a vehicle crosses its geofence or when a machine's vibration crosses a threshold are some examples. Real-time reporting is required where the business needs to see what is happening as it happens, such as location tracking of automobiles. Real-time processing is generally quick: the analytics or alert is expected within a few seconds or minutes of the data being generated at the device. Frameworks like Apache Flink, Apache Storm, Spark Streaming and Heron help in real-time processing.
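As an illustration of the geofence alert mentioned above, the sketch below checks each location event as it arrives against a circular geofence. It is plain Python rather than any of the named frameworks; the event fields (`vehicle`, `lat`, `lon`), the circular fence shape and the function names are assumptions made for the example.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (
        math.sin(dphi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    )
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def geofence_alerts(events, center, radius_km):
    """Yield an alert for every event that lies outside the circular geofence."""
    for event in events:  # events may be an unbounded stream
        distance = haversine_km(center[0], center[1], event["lat"], event["lon"])
        if distance > radius_km:
            yield {"vehicle": event["vehicle"], "distance_km": round(distance, 1)}
```

Because `geofence_alerts` is a generator, each event is evaluated on arrival with no batching, which is the property that matters for alerting. In a stream processor the same per-event check would typically run inside a filter or map operator.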

Batch processing is for more time-consuming or complex processing. This might include deeper, more accurate analytics, such as trends and analysis across various time periods, anomaly detection, rule-based models and data preparation for machine learning models. Real-time processing favors speed over accuracy, while batch processing gives precedence to accuracy. Frameworks like Apache Spark and Apache Tez help in batch processing.

Lambda processing is a hybrid approach. Generally, the processed data from the real-time and batch paths would be consumed separately. In Lambda processing, the real-time processed data is augmented with the more accurate batch-processed data, so the user gets a combined view of real-time and batch data in one place. Frameworks like Apache Druid and Apache Pinot (originally developed at LinkedIn) help in Lambda processing.
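The merging step of the Lambda approach can be sketched as follows. This is a simplified illustration of the idea, not how any of the named frameworks implement it: the function name `serve`, the hour-keyed views and the `batch_watermark` parameter are assumptions made for the example.

```python
def serve(batch_view, realtime_view, batch_watermark):
    """Combine the accurate batch view with the fresher real-time view.

    Both views map an hour to an event count. Hours up to and including
    batch_watermark have been recomputed by the batch path and are trusted;
    later hours are only available from the real-time path.
    """
    merged = {
        hour: count
        for hour, count in batch_view.items()
        if hour <= batch_watermark
    }
    for hour, count in realtime_view.items():
        if hour > batch_watermark:
            merged[hour] = count  # fresh but possibly approximate
    return merged
```

For an hour covered by both paths, the batch figure wins because it is the more accurate one; the real-time figure only fills in hours the batch job has not reached yet.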

Complex event processing (CEP)

Data processing can quickly get very complex, with different incoming data streams, a variety of actions available on the data, and different processing preferences among its stakeholders. With self-serve CEP platforms like Sentienz Akiro IoT, control can be given to business users so that they can easily define what they want to do with their data. Below is a sample CEP pipeline created by one of the users.

Making the platform self-serve is very important for the scalability of your IoT solution. Different stakeholders can manage their data and create pipelines and reports easily, which increases the adoption of your IoT data many times over.
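To give a feel for what a single CEP rule does, here is a generic sketch in plain Python. It is not Akiro's pipeline format or API; the class name, its parameters and the rule itself (a run of high readings within a time window) are hypothetical and chosen only to illustrate pattern detection across events.

```python
from collections import defaultdict, deque


class ConsecutiveThresholdRule:
    """Fire when a device reports `count` consecutive readings above
    `threshold` within `window_s` seconds."""

    def __init__(self, threshold, count, window_s):
        self.threshold = threshold
        self.count = count
        self.window_s = window_s
        # Per device: timestamps of the current run of high readings,
        # capped at the last `count` entries.
        self._runs = defaultdict(lambda: deque(maxlen=count))

    def on_event(self, device_id, timestamp, value):
        """Process one event; return True if the pattern is matched."""
        run = self._runs[device_id]
        if value <= self.threshold:
            run.clear()  # a normal reading breaks the run
            return False
        run.append(timestamp)
        return len(run) == self.count and run[-1] - run[0] <= self.window_s
```

A self-serve platform would let a business user set values such as the threshold, count and window through a UI, while the platform evaluates the rule against the incoming stream.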

Machine learning and Deep learning

This is one of the main value additions of an IoT platform and could be an article by itself. Machine learning can detect patterns in the data that would otherwise be very difficult to find, enabling use cases like anomaly detection, predictive maintenance and recommendations for optimized energy consumption.
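As a small taste of anomaly detection on sensor data, the sketch below flags readings that deviate strongly from their recent history. It is a simple statistical baseline (a trailing-window z-score), not a trained machine learning model; the function name, window size and threshold are assumptions chosen for the example.

```python
from statistics import mean, stdev


def zscore_anomalies(values, window=5, threshold=3.0):
    """Return the indices of readings that lie more than `threshold`
    standard deviations from the mean of the preceding `window` readings."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Skip flat histories, where the z-score is undefined.
        if sigma > 0 and abs(values[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies
```

A baseline like this is often a useful first step before investing in learned models, since it needs no training data and its decisions are easy to explain.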

Deep learning is the next frontier of machine learning. It is loosely modeled on human learning, using artificial neural networks (ANNs) such as convolutional neural networks (CNNs). These were earlier used mainly for image pattern detection and processing and are now being applied to IoT sensor data as well. Deep learning can detect advanced patterns, help in video and audio processing, and aid advanced decision making, as in autonomous cars, and in use cases like intrusion detection, human behavior recognition, power demand forecasting and path planning.

Conclusion

Big Data and IoT are two behemoths. When the two combine, the possibilities are immense. They need to be planned and executed meticulously, or things can get messy very quickly, especially given the scale and complexity of the platform. Based on our experience in IoT and Big Data, this article is a small attempt to help businesses onboard onto IoT and Big Data more easily.

References

- https://sst.semiconductor-digest.com/2017/10/number-of-connected-iot-devices-will-surge-to-125-billion-by-2030/

- https://www.zdnet.com/article/survey-industrial-iot-deployment-thriving/

- https://cybersecurityventures.com/cybercrime-infographic/

- https://www.sciencedirect.com/science/article/pii/S1877050919321593

- https://theakiro.com/

- https://www.computer.org/publications/tech-news/research/deep-learning-iot-frameworks-next-generation-mobile-apps

Author:

Ravi Teja Chilukuri

CTO and Akiro Product Head

Sentienz

Ravi is the CTO of Sentienz and also leads Sentienz Akiro, the company's IoT platform. Earlier, Ravi was with Flipkart, PayPal and Huawei, building internet-scale platforms, and he is a contributor to Apache Hadoop (YARN, Tez, MapReduce) and Kafka. He’s passionate about distributed systems, IoT and Big Data.

Srikanth GN

CoFounder and Architect

Sentienz

A devout product innovator and technical leader turned entrepreneur, with a focus on media, mobility, telco IoT, smart home and connected device technologies.

Published in Telematics Wire