Changing face of Telematics with Autonomous Vehicles Technology Stack

Telematics has been defined as the technology of sending, receiving and storing information using telecommunication devices to control remote objects; as the integrated use of telecommunications and informatics in vehicles, including the control of vehicles on the move; and as global navigation satellite system technology integrated with computers and mobile communications in automotive navigation systems. These are all typical definitions from Wikipedia. The telematics industry emerged alongside 2G telecommunication networks and GPS-based positioning, which together made it possible to share a vehicle’s location. Today, in 2021, companies such as Waymo (Google), Tesla (Autopilot), Cruise, Comma.ai and Voyage are putting entire GPU systems into vehicles so that the vehicle can make driving decisions by itself. GPS-based location tracking and autonomous vehicles are the two extremes of vehicular technology. This is a strange situation, and an opportunity in a way: 90% of commercial trucks are not even using telematics, while the industry is already working towards Level 5 autonomy. The interesting question is how we bridge that gap and move into the age of advanced technology and communication.

The road to autonomy, and to Level 5 systems in particular, will not arrive suddenly; it will be a long journey of technology adoption in commercial vehicles. Vehicular telematics started with GPS-based navigation and tracking systems, which are now mandated for commercial vehicles in India and in many other countries. The next improvement was OBD-II based engine diagnostics combined with location data. OBD-II devices greatly improved visibility into the monitored vehicle and opened the way to predictive maintenance, which relies heavily on data science and machine learning. That in turn created a need for high-performance computing on cloud or local servers to run heavy learning algorithms and derive predictions. This stage was reached around 2006-2008 in the US and around 2010 in India, when Indian vehicles started shipping with an OBD-II port that supported J1939 and related protocols for reading data from the engine. OBD-II based devices could also build a picture of driving behavior, which resulted in better fleet management systems.
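To make "reading data from the engine" a little more concrete, here is a minimal sketch of how a telematics device might decode one J1939 frame. It assumes the device already receives raw CAN frames as a 29-bit identifier plus an 8-byte payload; the function and class names are hypothetical, and the scaling used is the commonly documented one for engine speed (PGN 61444, 0.125 rpm per bit), so treat it as an illustration rather than a production decoder.

```python
from dataclasses import dataclass
from typing import Optional

EEC1_PGN = 61444      # "Electronic Engine Controller 1", the message carrying engine speed
RPM_PER_BIT = 0.125   # resolution of the engine speed signal


@dataclass
class J1939Id:
    priority: int
    pgn: int
    source_address: int


def parse_j1939_id(can_id: int) -> J1939Id:
    """Split a 29-bit extended CAN identifier into its J1939 fields."""
    priority = (can_id >> 26) & 0x7
    pdu_format = (can_id >> 16) & 0xFF
    pgn = (can_id >> 8) & 0x3FFFF
    if pdu_format < 240:
        # Destination-specific message: the low byte is an address, not part of the PGN.
        pgn &= 0x3FF00
    return J1939Id(priority, pgn, can_id & 0xFF)


def engine_speed_rpm(payload: bytes) -> Optional[float]:
    """Decode engine speed from an EEC1 payload (4th and 5th bytes, little-endian)."""
    raw = payload[3] | (payload[4] << 8)
    if raw > 0xFAFF:
        # Values above 0xFAFF are reserved for error / not-available indicators.
        return None
    return raw * RPM_PER_BIT


# Illustrative frame: identifier 0x0CF00400 with a payload encoding 1500 rpm.
frame_id = parse_j1939_id(0x0CF00400)
payload = bytes([0xFF, 0xFF, 0xFF, 0xE0, 0x2E, 0xFF, 0xFF, 0xFF])
if frame_id.pgn == EEC1_PGN:
    print(frame_id, engine_speed_rpm(payload))
```

A stream of such decoded values, tagged with GPS position and time, is the raw material for the driving behavior and predictive maintenance analytics described above.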

A new era in vehicular telematics began when cameras joined the race. Contextual information first came from dashboard camera systems, which could record video and store it on the device itself. Lots of people also started using CCTV solutions in heavy vehicles for surveillance. The need for visual information began to create a space in the market, but few solutions or products were available, and those that were available were not affordable in the Indian context. The other important aspect of the telematics industry is the communication medium: it began with 2G-enabled devices transmitting data from OBD-II and GPS based systems. Growth in the telematics industry has always involved a trade-off between performance and price. More features mean a higher cost per vehicle, and high-end products have very little room to outshine older ones: being somewhat more expensive, they are seen as a luxury while older technology remains easily available at lower cost. The real challenge for newer technologies is to displace the GPS-based systems and speed-based analytics that have been in use for years.

With the emerging and growing power of AI/ML and vision technologies, the face of telematics is also changing. The biggest force behind this is the growth of autonomous vehicles at Levels 2, 3, 4 and 5. Many companies in the USA, China, Germany and Singapore have developed full-stack software for autonomous vehicles. A typical autonomous vehicle works on a very simple principle built around three questions:

The first question, where am I, is about localization, which can be achieved with GNSS (Global Navigation Satellite System), an IMU (Inertial Measurement Unit) and cameras (lane position). The second question, what do I see, is about perception, which can be achieved with LIDAR, RADAR and cameras: what kinds of objects are visible, how far away they are, and how critical the situation becomes if the vehicle moves in a certain direction. The answers are derived with the help of AI/ML and computer vision algorithms. The third question is about decision making and movement. Once you know where you are and what you see, what is the best way to move ahead in the current situation and location: what is the best speed, or do I need to brake and stop? The answers to all these questions are then acted upon by the vehicle’s central computer, which actuates the vehicle accordingly.
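The three questions can be sketched as a tiny decision step. This is a toy illustration only: the data classes, the stopping-distance rule and the default reaction time and deceleration are assumptions made for the example, not how any particular AV stack works.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Pose:
    """Answer to 'where am I?' (from GNSS, IMU and lane-position camera)."""
    speed_mps: float
    lane_offset_m: float  # part of the localization answer; unused by this toy rule


@dataclass
class Obstacle:
    """Answer to 'what do I see?' (from LIDAR, RADAR and camera)."""
    kind: str
    distance_m: float  # distance ahead along the direction of travel


def decide(pose: Pose, obstacles: List[Obstacle],
           reaction_time_s: float = 1.5, max_decel_mps2: float = 6.0) -> str:
    """Answer 'what should I do next?' with a simple stopping-distance rule."""
    # Distance covered while reacting, plus braking distance v^2 / (2a).
    stopping_m = (pose.speed_mps * reaction_time_s
                  + pose.speed_mps ** 2 / (2 * max_decel_mps2))
    nearest_m = min((o.distance_m for o in obstacles), default=float("inf"))
    if nearest_m <= stopping_m:
        return "brake"
    if nearest_m <= 2 * stopping_m:
        return "slow_down"
    return "keep_speed"


pose = Pose(speed_mps=20.0, lane_offset_m=0.1)
print(decide(pose, [Obstacle("truck", 50.0)]))
```

In a real vehicle each of the three answers comes from heavy AI/ML and computer vision workloads; the point here is only the order of the questions and how the first two feed the third.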

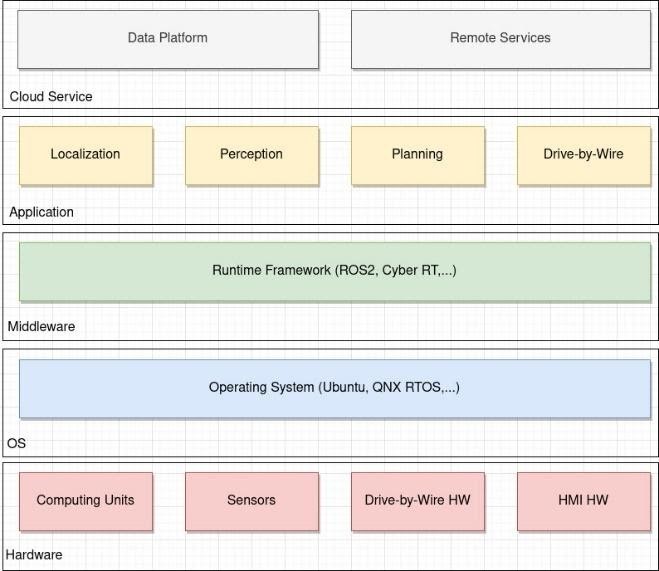

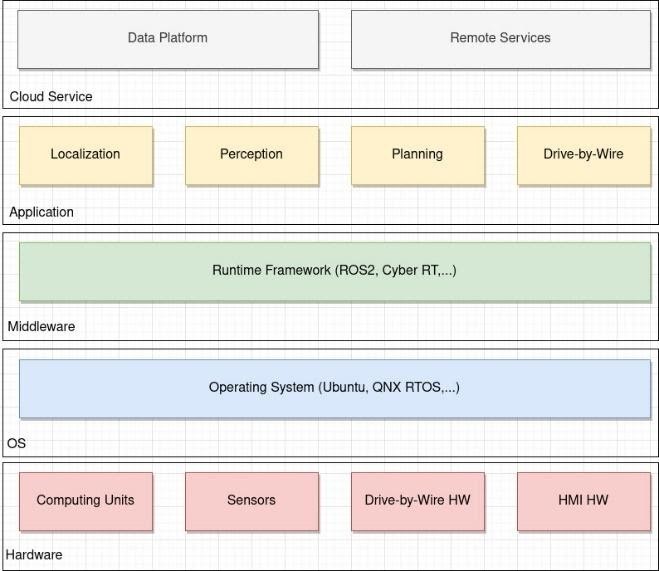

The typical technology stack for AVs is shown in the accompanying figure.

Working through the layers of the stack, the cloud services layer includes data management for the AI/ML and CV algorithms. The application stack covers the questions from the section above, the middleware is a ROS-like framework for building applications on top of the OS layer, and then comes the hardware layer, which is the most critical and deciding factor. The hardware layer determines how the AV technology stack and the telematics system come together. AVs are typically very expensive systems that include LIDARs, RADARs, cameras, an IMU and on-board storage. The decisions that actuate the vehicle are made on the vehicle itself, which requires lightning-fast computing, and that can be achieved with high-performance GPU systems. These systems run AI/ML and vision based algorithms and decide how to drive the vehicle.

Interestingly, a few car makers in the luxury segment do provide driving assistance systems such as cruise control, automatic braking and traffic jam assist. These systems are called ADAS, i.e., Advanced Driver Assistance Systems. They are a kind of precursor to AVs in which the driver is always present, and they are considered Level 2 autonomy.

Commercial vehicles in India, specifically MCVs and HCVs, do not come with any kind of assistive mechanism, even though the majority of the logistics and fleet industry relies on them. Most of them are now equipped with GPS-based location tracking systems for better fleet management. Accident rates are high in India and globally, and as freight movement has increased, so has the risk of loss of lives and assets. The world would like to see full autonomy at every corner, but for the transportation sector that remains a far-off goal, one in which the global driving ecosystem is made safer and more secure by technology and autonomous vehicles. It is said that with AVs and a connected vehicle ecosystem, vehicles will be able to follow one another, will not require traffic signals and will even update their insurance premiums automatically. These are all good fantasies to watch for in the future.

The need of the hour is to use the software stack of autonomous vehicles to provide safer journeys through intelligent and insightful decision making. Such systems are comparable to ADAS in luxury vehicles, but they are retrofitted and offer more than typical GPS/OBD/dashcam based telematics systems. It is interesting to note that AV companies have taken many different routes to building AVs, and that the incremental approach has had more success in achieving self-driving than the direct route. The reason is human drivers. The fundamental purpose of AI is to augment humans and help them make decisions; vehicle driving is likewise about learning from humans and helping them with their own driving.

In 2018-19 many heavily funded AV companies had to shut shop because they had no revenue plans for the future and because the technology stack turned out to be impractical or not feasible. Many of these companies were either acquired by tech majors to earn valuations or took a step back and used the technologies developed for AVs in ADAS and telematics products. A few AV companies have taken an incremental path towards AVs, and they are still surviving in a tough market by making money from telematics and ADAS services.

The real path to building AVs runs through the mass adoption of telematics and ADAS in commercial vehicles and then in passenger vehicles. Larger adoption leads to more data and finer learning, so that algorithms can make better decisions in unstructured and uncertain environments in future. The key is to adopt the technology stack of AVs and remove the control section from it. In practice, it looks like this:

Removing the Planning and Drive by Wire sections from the application layer, and the drive-by-wire hardware from the hardware layer, turns the stack into a complete advanced technology solution for telematics.
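The same idea can be written down as a small data structure. The layer and component names below are assumptions pieced together from the description in this article, not an official stack definition; the only thing the sketch demonstrates is that the telematics stack is the AV stack minus its control components.

```python
from typing import Dict, List

# Hypothetical AV stack, layer by layer (names taken loosely from the text above).
AV_STACK: Dict[str, List[str]] = {
    "cloud": ["Data management for AI/ML & CV"],
    "application": ["Localization", "Perception", "Planning", "Drive by Wire"],
    "middleware": ["ROS-like framework"],
    "os": ["Operating system"],
    "hardware": ["GNSS", "IMU", "Camera", "LIDAR", "RADAR",
                 "GPU compute", "Storage", "Drive by Wire HW"],
}

# Components that control the vehicle and are removed for telematics use.
CONTROL_COMPONENTS = {"Planning", "Drive by Wire", "Drive by Wire HW"}


def telematics_stack(av_stack: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Return a copy of the stack without the vehicle-control components."""
    return {
        layer: [c for c in components if c not in CONTROL_COMPONENTS]
        for layer, components in av_stack.items()
    }


for layer, components in telematics_stack(AV_STACK).items():
    print(f"{layer}: {', '.join(components)}")
```

What remains still localizes and perceives, which is exactly what an advanced telematics or ADAS device needs; it simply stops short of steering or braking the vehicle.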

Over time, telematics has also improved with data analytics and machine learning. Location and OBD based systems now come with data dashboards and insights about fleet and driver performance, route optimization and many more applications. Another vertical that benefits from data-driven fleets and telematics is the insurance industry. Insurers have always been evangelists for new technologies, and that is likely to be the case for telematics as well.

Telematics systems today have reached a stage where they can fetch data from OBD-II, GPS, tyre pressure sensors and driver-facing cameras. AI/ML algorithms are applied to all this data to drive dashboards for preventive maintenance, driver incentivization and monitoring. The next advancement will be telematics devices with built-in ADAS capability. They will also have AI processing power on the device itself, and they can be retrofitted to any kind of vehicle. The crucial requirement is computing power, i.e., GPUs. 2019 was expected to be the year that brought lots of edge computing hardware to the market, but progress has been slow because of the pandemic. An edge compute device combined with high-speed communication, 4G for now and 5G in future, will change the face of telematics completely.

Advanced telematics technologies will not only track or monitor the vehicle; they will also observe the driver, learn from the driver and train the driver. The data collected from camera systems will help build the context of the situation at any point in a drive, along with the decision the driver made. The systems will come with active assistance mechanisms that help drivers avoid collision-prone situations when they arise. This will extend the life of a fleet’s assets, drivers as well as vehicles. Success depends on how interactive these devices are, because they will interact with people who have never interacted with technology while driving, and living with the new technologies will be a huge psychological shift for commercial truckers. Earlier generations of telematics have always met resistance from drivers, who believe the devices are spying on them. Although every telematics device improves visibility for fleet companies and managers, most drivers want to get rid of them, and there have been cases where drivers removed the devices and threw them out of the vehicle. That in turn makes fleet operators reluctant to adopt, because the losses are high if drivers do not accept the devices.
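One common building block of such active assistance is a forward collision warning based on time to collision, i.e., the gap to the vehicle ahead divided by the closing speed. The sketch below is a simplified illustration; the function names and the 4-second and 2-second thresholds are assumptions chosen for the example, and a real device would tune them and add filtering on noisy camera or radar measurements.

```python
from typing import Optional


def time_to_collision_s(gap_m: float, own_speed_mps: float,
                        lead_speed_mps: float) -> Optional[float]:
    """Seconds until the gap closes at current speeds, or None if it is not closing."""
    closing_speed = own_speed_mps - lead_speed_mps
    if closing_speed <= 0:
        return None
    return gap_m / closing_speed


def warning_level(ttc_s: Optional[float],
                  caution_s: float = 4.0, critical_s: float = 2.0) -> str:
    """Map a time to collision onto a driver alert level."""
    if ttc_s is None or ttc_s > caution_s:
        return "none"
    if ttc_s > critical_s:
        return "caution"
    return "critical"


# A truck at 20 m/s following a vehicle at 10 m/s with a 30 m gap.
ttc = time_to_collision_s(gap_m=30.0, own_speed_mps=20.0, lead_speed_mps=10.0)
print(ttc, warning_level(ttc))
```

How the alert is delivered matters as much as the calculation: a graded warning that coaches rather than nags is more likely to be accepted by drivers who already distrust in-cab devices.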

Developing a technology and a product is easier than getting users to adopt it, and an advanced technology that is meant to assist and coach its users will require a shift in mindset. Once adoption is in place, it becomes possible to take the technology to the next level, where vehicles can make decisions on behalf of drivers. The journey to self-driving will come via commercial vehicles, and for that to happen OEMs will have to adopt such technologies first as retrofitment and then as OEM fitment. For a context like India, given current activity in the fleet management industry, the journey with telematics will look as below:

To bring AVs onto the road and have machines drive vehicles in difficult, uncertain scenarios with existing infrastructure, we will need to learn how humans drive, what challenges they face, and how they handle incidents to avoid accidents, or fail to. This kind of learning can be achieved only through continuous learning from human drivers, and that will come from advanced telematics devices with ADAS capabilities.

Developing the brain that drives vehicles on the road is a journey not only for engineers but for all stakeholders, and the most important of them are the drivers, who will bring about the change and the adoption. The engineering marvel that will make this possible will be learnt only from human drivers, through a continuous feedback loop into the vehicle’s active safety systems, which will adapt to how drivers actually drive. This process will continue for a long time, given the infinite number of uncertain driving scenarios and the struggle of human drivers to sink or swim in them.

Whether or not the world sees autonomous vehicles in the coming decades, the technology that has already been implemented is sure to open avenues for fleet management, driver management, driver coaching, insurance risk assessment and semi-autonomy, with the help of changing telematics.

Author:

Nisarg is an entrepreneur and the founder and CEO of drivebuddyAI. drivebuddyAI is an intelligent driver management and safety platform for fleets and logistics companies, based in Delhi, India. Nisarg is an electronics engineer with vast experience in IoT, AI and CV based products. Nisarg has been successfully driving the company from zero to one and expanding it.

Published in Telematics Wire