NVIDIA on "Future Proofing" the car with mobile processors

“To combat any malicious software potentially affecting the safety or control systems of the car, many automakers are taking a sandboxed approach, keeping the infotainment systems separate from other parts of the vehicle. Furthermore, implementing hypervisor techniques enables multiple operating systems to run simultaneously on a single system, separating them in case one has an issue.” – Danny Shapiro, Sr. Director-Automotive | NVIDIA

In the automotive industry, what was recently considered science fiction will become reality in the next few years. Technology is no longer an obstacle to bringing automotive dreams, like the self-driving car, to life. And while it is clear that there is still an enormous amount of work to do as global authorities debate the ethics, legalities, and a myriad of other implications of self-driving cars, they are now on our streets undergoing testing and development. For consumers, this automotive technology revolution will make transportation safer, more convenient, and less stressful than ever before.

Building Blocks for the Smart Car

At the heart of the drive toward the future car are the same technologies and components that made the phone smart: mobile communications, sensors, and processing technologies. Consumers now have extremely powerful computers — with location sensors, cameras, touchscreens, and wireless connectivity — in the palms of their hands, and they want the same experience inside of their cars. The challenge for automakers is not just the integration of these technologies, which has already begun, but how to do it correctly so that the car is able to keep up with the fast pace of consumer technology innovation.

The first area of the car that has experienced a technological makeover is the dashboard. In “first generation” infotainment systems that are now on the road, automakers focused on building a digital screen interface with connectivity and control capabilities for smartphones. While automakers have had mixed results in terms of the consumer success of these systems, it is clear that the digital experience is valued. The lesson learned is that the rapid pace of innovation in consumer technologies, from smartphones to tablets, raises the expectations of car buyers. While automakers have accelerated the pace of their product improvement, these cycles still average two to three years, making it very difficult to maintain up-to-date capabilities similar to what consumers experience in their homes or offices.

The answer for automakers lies in following what spurred the revolution in mobile device growth and innovation: building a highly-capable hardware platform with a flexible operating system that is able to adapt to future needs. This will require the adoption of advanced processing capabilities to deliver experiences such as fast touchscreen response, rich photorealistic graphics, customizable and personalized information, plus room to grow as other capabilities come online during the ownership period.

In addition to the advanced processing in the vehicle for infotainment capabilities, mobile platform developers, like Apple with iOS and Google with Android, are looking to seamlessly integrate their smartphone experience into the car. Communication to the Cloud and to mobile devices will play a valuable role in shaping the future car as consumers expect to be connected and online everywhere they go. In an effort to bring the best of the automotive and technology industries together for a solution, Audi, GM, Google, Honda, Hyundai, and NVIDIA have formed the Open Automotive Alliance (OAA), a global alliance of technology and auto industry leaders that will begin bringing the Android platform to cars in late 2014.

This alliance will foster the use of Android in automotive applications, building off the success of the operating system in smartphones and tablets, but creating an appropriate interface for the car. The development of intuitive and simple interfaces for interacting with a connected smartphone has been a challenge for automakers, so this collaborative effort is anticipated to be a breakthrough. This organization is expected to grow significantly in the near future.

But whether it is integrating Android into the car or Apple’s CarPlay interface for the iPhone, the fact that more devices are connecting to the vehicle introduces the inherent risk of security breaches. Computer viruses and hacking remain a problem today for desktop computers, so what will it take to make the car immune? To combat any malicious software potentially affecting the safety or control systems of the car, many automakers are taking a sandboxed approach, keeping the infotainment systems separate from other parts of the vehicle. Furthermore, implementing hypervisor techniques enables multiple operating systems to run simultaneously on a single system, separating them in case one has an issue.

Another area that will hugely benefit automakers in their effort to keep pace with consumer electronics is a programmable, or updatable, infotainment system. Whether consumers notice it or not, their smartphones are getting better during the ownership period by receiving over-the-air (OTA) updates. As cars become increasingly connected, OTA software updates become possible, allowing automakers to improve existing in-vehicle features and offer new ones over the course of the vehicle’s life. This, of course, is expected in the consumer electronics world, but until a few years ago was totally unheard of in the automotive sector. Pioneered by Tesla Motors, OTA software updates have enabled the company to add new features while Model S cars sit in their owners’ garages at night, as well as to improve some vehicle parameters, a change that might have required a costly recall at a traditional automaker.

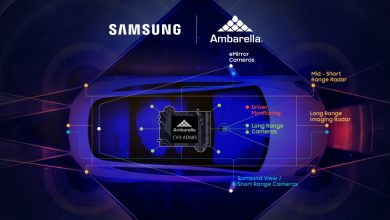

Going beyond the dashboard, the area requiring the most advancement in technology, especially to achieve the vision for the self-driving car, is sensor data processing and decision-making. As more sensors (cameras, radar, laser scanners, and ultrasonic sensors) are added to the car, an incredible amount of data is being amassed every second. To process this information, massively parallel, high-performance processors are required, but they must operate in an extremely energy-efficient manner. The architecture that is used for the world’s fastest computers, or supercomputers, which can handle thousands of computation points every second, is needed for these automotive applications, scaled down to an appropriate size and an energy-efficient package.

Without these three building blocks of the future in play (an intuitive user interface, seamless updates, and high-performance, energy-efficient processing), automakers might be stuck with trunks full of expensive desktop computers in their cars, and self-driving cars will never make it out of the world of research and into the mainstream.

Mobile Processors for Autonomous Driving

The seeds of full-scale autonomous driving can already be found in car models today. Various driver assistance features like pedestrian detection, lane departure warning, active parallel parking assistance, and speed limit sign recognition are incremental steps on the way to a full autonomous driving experience.

A key technology at the heart of autonomous driving is computer vision. That doesn’t just mean having a lot of cameras on the car; it means having high-performance, energy-efficient processors that can analyze the video coming from these cameras. Sophisticated algorithms need to process the incoming information, reported to be as much as 1 gigabyte per second, in real time. To address the increased computation needs of mobile devices (especially cars), NVIDIA recently introduced the Tegra K1 mobile processor. It packs 10 times the computing power of its predecessors and yet still operates in the same power envelope. That’s essential to process all the sensor data that come into play in autonomous driving.
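To get a feel for how camera data can add up to a figure on the order of 1 gigabyte per second, here is a rough back-of-the-envelope sketch. The resolution, color depth, frame rate, and camera count are illustrative assumptions only; they are not taken from NVIDIA or from any particular vehicle, and real systems may compress or subsample the video.

```python
# Back-of-the-envelope estimate of raw camera bandwidth.
# Every figure here is a hypothetical assumption chosen for illustration.

def camera_bytes_per_second(width, height, bytes_per_pixel, fps):
    """Raw (uncompressed) data rate of one camera, in bytes per second."""
    return width * height * bytes_per_pixel * fps

# Assumed camera: 1280x720 pixels, 3 bytes per pixel, 30 frames per second.
per_camera = camera_bytes_per_second(1280, 720, 3, 30)

# Assumed vehicle: 12 such cameras.
num_cameras = 12
total = per_camera * num_cameras

print(f"one camera: {per_camera / 1e6:.1f} MB/s")
print(f"{num_cameras} cameras: {total / 1e9:.2f} GB/s")
```

Under these assumptions a single camera produces roughly 83 MB of raw video per second, so a dozen of them together approach 1 GB per second, before radar, laser scanner, or ultrasonic data is counted.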

With a quad-core CPU and a 192-core graphics processing unit (GPU), Tegra K1 will enable camera-based, advanced driver assistance systems (ADAS) — such as pedestrian detection, blind-spot monitoring, lane-departure warning, and street sign recognition — and can also monitor driver alertness via a dashboard-mounted camera. Utilizing the same parallel processing architecture as used in high-performance computing solutions, the Tegra K1 is the first mobile supercomputing platform on the market.

ADAS solutions currently on the market are based mainly on proprietary processors. NVIDIA Tegra K1 moves beyond this limitation by providing an open, scalable platform. The Tegra K1 processor was designed to be fully programmable; therefore, complex computer systems built upon it can be enhanced via over-the-air software updates. In addition, this sophisticated system on a chip (SoC) can run other apps such as speech recognition, natural language processing, and object recognition algorithms that interpret in real time what is a sign, a car, a pedestrian, a dog, or a ball bouncing into the road.

Automakers who are already engaged with NVIDIA and using the visual computing module (VCM) — a highly scalable computer system — for infotainment solutions can easily upgrade their in-vehicle systems with new processors due to the modular approach.

Layered on top of the Tegra processor is a suite of software libraries and algorithms that accelerate the process of creating computer vision applications for different driver assistance systems. Since these systems are software-based, automakers have the flexibility to update these algorithms over time, improving the overall performance and safety of the vehicle. Conversely, fixed-function silicon and black boxes delivering solutions for each specific function are an expensive and ultimately dead-end route.

As the graphics on in-vehicle screens improve, personalization of the instrument cluster is also possible. Advanced rendering capabilities on a mobile supercomputer enable in-vehicle displays to rival the visuals created by Hollywood visual effects houses and professional designers. The result is photorealistic content that looks just like real materials, such as leather, wood, carbon fiber, or brushed metal.

At the 2014 Consumer Electronics Show (CES), Audi announced a virtual digital cockpit, powered by an NVIDIA VCM. Inside the next-generation Audi TT, the virtual cockpit display can be adapted to a driver’s needs, displaying the most relevant information at any time, including speedometer, tachometer, maps, menus, and music selections, helping reduce complexity and provide more customization options to its drivers.

NVIDIA has a long-standing relationship with many automakers, including Audi, Volkswagen, BMW, and Tesla. Audi was the first to deliver Google Earth and Google Street View navigation using NVIDIA technology. And during Audi’s CES keynote, after one of their vehicles drove itself onto the stage, the company announced that Tegra K1 will power its piloted-driving and self-parking features currently in development.

Moving Forward with Future-Proof Cars

Given the tremendous increase in computing technology, both from hardware and software perspectives, new challenges have emerged for the automaker. Traditional supply chain models do not work when considering the need for computing platforms and complex software stacks comprised of multiple operating systems, photorealistic rendering, computer vision toolkits, and hypervisors. Only when an automaker has broken the traditional supplier model and instead created a technology partnership can the complex computing systems be developed in a cost-effective and timely manner. Integrating a supercomputer in the car is necessary to achieve the full vision for the future car, especially autonomous driving. A modular approach coupled with programmability enables these systems to rapidly evolve.

It is no secret that car makers put safety at the heart of their strategy. Moving forward, they need a technology strategy that is equally rigorous. And before long, with the right selection of supercomputing technology, we will have self-driving cars on our streets.

About the author

Danny Shapiro is NVIDIA’s Senior Director of Automotive, focusing on solutions that enable faster and better design of automobiles, as well as in-vehicle solutions for infotainment, navigation, and driver assistance. He is a 25-year veteran of the computer graphics and semiconductor industries, and has been with NVIDIA since 2009. Prior to NVIDIA, Shapiro served in marketing, business development, and engineering roles at ATI, 3Dlabs, Silicon Graphics, and Digital Equipment. He holds a B.S.E. in Electrical Engineering and Computer Science from Princeton University and an M.B.A. from the Haas School of Business at UC Berkeley. Shapiro lives in Northern California where his home solar panel system charges his electric car.